Last week, the FDA announced that it will be investigating a new review framework for artificial intelligence (AI)-based medical devices.¹ Over the past few years, the FDA has already cleared many AI-based medical software products. So what is the difference between those AI algorithms and the ones the FDA refers to in its press release of April 2nd? And why can't the latter be handled by the current review frameworks?

Artificial intelligence ≠ deep learning ≠ continuous learning

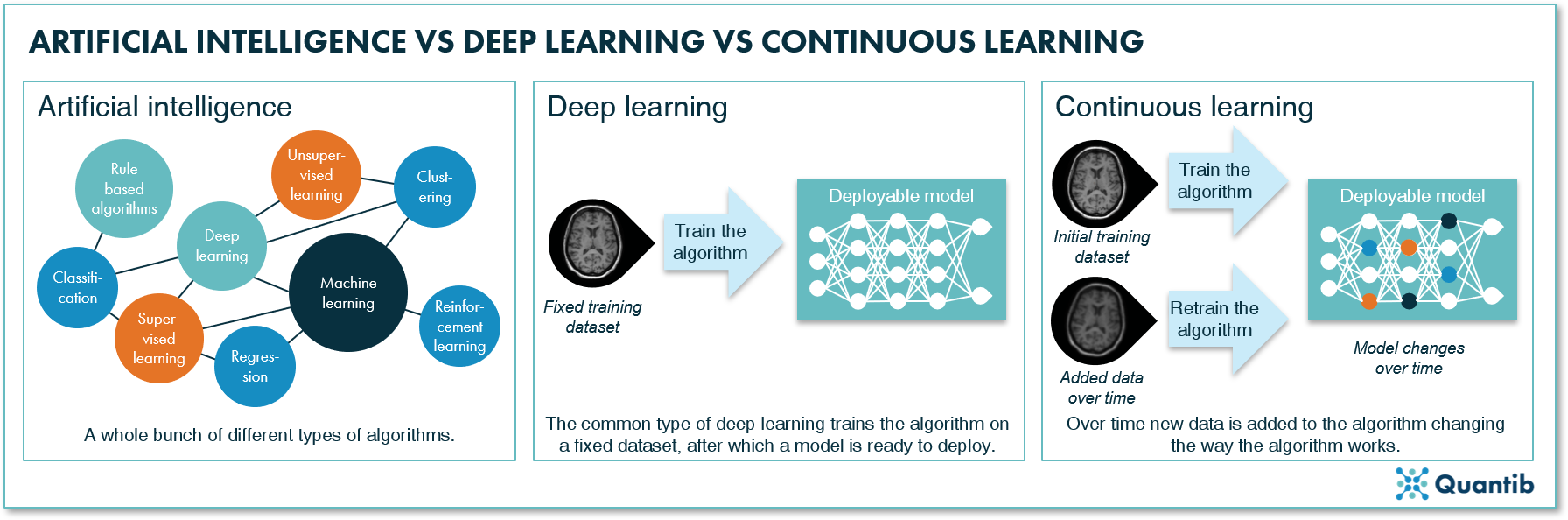

It is commonly thought that software is not really AI-based unless it uses deep learning techniques. In addition, deep learning is often characterized as ever-changing, constantly learning from new data. However, these three concepts differ considerably. Understanding the differences will help us better grasp the challenges the FDA faces in approving AI software.

Artificial intelligence (AI)

In its most basic form, artificial intelligence is nothing more than a computer program that simulates intelligent human behavior. This can be very simple: for example, an algorithm that selects all patients who had a breast MRI scan and lines them up for the radiologist to assess. You could do this manually, but a computer is very well equipped to perform that task. More advanced AI methods go a step further and detect patterns that humans cannot distinguish. Radiogenomics is an example: when a database containing both genetic information and images is available, it can be used to train an algorithm that infers a patient's genetic information from medical images alone.

Deep learning

Within all the methods covered by AI, there is a subset called deep learning. Deep learning methods can solve all sorts of problems. What sets them apart is that they use algorithms loosely inspired by the structure of biological nervous systems, i.e. neural networks. If you are curious how these algorithms work, read more about them in The Ultimate Guide to AI in Radiology.

Continuous learning

An AI algorithm is said to be “continuously learning” if new data is fed into the algorithm while it is in use and the algorithm uses this data to update itself. For example, the algorithm is installed in the hospital and used to analyze images, while each image interpreted by a radiologist is used to further train it. The opposite of continuously learning algorithms are “locked” algorithms, which are trained offsite: only after training is completely finished and the algorithm's performance has been tested are they installed in a hospital for deployment. The new review framework the FDA is developing focuses on continuous learning.
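To make the distinction concrete, here is a minimal Python sketch. It assumes a tiny logistic-regression model as a stand-in for a real imaging algorithm and image features already reduced to a few numbers. The class names (`LogisticModel`, `LockedAlgorithm`, `ContinuouslyLearningAlgorithm`), the learning rate and the example cases are hypothetical illustrations. The only difference between the two deployed algorithms is what they do with the radiologist's read after analyzing an image.

```python
import math
from dataclasses import dataclass
from typing import List, Sequence


@dataclass
class LogisticModel:
    """Tiny logistic-regression classifier standing in for a trained imaging model."""
    weights: List[float]
    bias: float = 0.0

    def predict_proba(self, x: Sequence[float]) -> float:
        z = sum(w * xi for w, xi in zip(self.weights, x)) + self.bias
        return 1.0 / (1.0 + math.exp(-z))

    def sgd_step(self, x: Sequence[float], y: int, lr: float) -> None:
        # One stochastic gradient step on the log-loss; dLoss/dz = p - y.
        error = self.predict_proba(x) - y
        self.weights = [w - lr * error * xi for w, xi in zip(self.weights, x)]
        self.bias -= lr * error


class LockedAlgorithm:
    """Trained and tested offsite; its parameters never change after installation."""

    def __init__(self, model: LogisticModel) -> None:
        self.model = model

    def analyze(self, x: Sequence[float]) -> float:
        return self.model.predict_proba(x)

    def receive_feedback(self, x: Sequence[float], radiologist_label: int) -> None:
        pass  # A locked algorithm ignores the radiologist's read.


class ContinuouslyLearningAlgorithm(LockedAlgorithm):
    """Uses every image a radiologist has interpreted to further train itself."""

    def __init__(self, model: LogisticModel, lr: float = 0.1) -> None:
        super().__init__(model)
        self.lr = lr

    def receive_feedback(self, x: Sequence[float], radiologist_label: int) -> None:
        self.model.sgd_step(x, radiologist_label, self.lr)


# Both algorithms start from the same (hypothetical) offsite-trained weights.
locked = LockedAlgorithm(LogisticModel(weights=[0.5, -0.2]))
learning = ContinuouslyLearningAlgorithm(LogisticModel(weights=[0.5, -0.2]))

# Hypothetical local cases: (image features, radiologist's label).
local_cases = [([1.0, 2.0], 1), ([0.2, 0.1], 0), ([0.9, 1.8], 1)]
for features, label in local_cases:
    for algorithm in (locked, learning):
        algorithm.analyze(features)  # output shown to the radiologist
        algorithm.receive_feedback(features, label)

print(locked.model.weights)    # unchanged: [0.5, -0.2]
print(learning.model.weights)  # adapted to this hospital's data
```

After only three local cases, the continuously learning copy already has different weights than the locked one. This is why its behavior can drift away from the version that was originally reviewed.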

Figure 1: Artificial intelligence can be seen as a catch-all term. Deep learning is a set of methods within the field of artificial intelligence using deep neural networks. Continuous learning is a specific technique that allows you to continuously add data to the network to keep training it.


Continuous learning has two clear benefits. 1) It puts newly acquired data to work as quickly as possible: the algorithm can improve every time new data is added. 2) The algorithm adjusts itself to the patient population and imaging protocols of the hospital where it is installed. As the training data increasingly consists of scans acquired at the institution that uses it, the output becomes more and more “personalized” for that institution.

On the other hand, implementing continuous learning software raises considerable challenges. How do we ensure that the algorithm actually gets better with every image that is added? How do we implement a seamless data flow? And since the algorithm adapts to specific institutions, its results will differ between institutions. How do we make sure that this is not a problem?
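One possible way to approach the first question is to gate every update: a retrained candidate replaces the deployed model only if it performs at least as well on a fixed, curated reference set that is never used for training. The sketch below assumes plain accuracy as the metric and uses hypothetical names (`gated_update`, `reference_set`, `tolerance`); a real system would use clinically meaningful metrics and a far larger reference set. It cannot prove that the algorithm gets better, only that it has not measurably gotten worse on that set.

```python
from typing import Callable, List, Sequence, Tuple

Case = Tuple[Sequence[float], int]             # (image features, reference label)
Classifier = Callable[[Sequence[float]], int]


def accuracy(model: Classifier, cases: List[Case]) -> float:
    """Fraction of reference cases the model labels correctly."""
    return sum(model(x) == y for x, y in cases) / len(cases)


def gated_update(current: Classifier, candidate: Classifier,
                 reference_set: List[Case], tolerance: float = 0.0) -> Classifier:
    """Return the model to deploy: the retrained candidate only if it is no worse
    (within `tolerance`) than the current model on a fixed reference set."""
    if accuracy(candidate, reference_set) >= accuracy(current, reference_set) - tolerance:
        return candidate
    return current


# Hypothetical fixed reference set, curated once and never used for training.
reference_set = [([0.2], 0), ([0.4], 0), ([0.6], 1), ([0.9], 1)]


def current_model(x: Sequence[float]) -> int:
    return int(x[0] > 0.5)  # 4/4 correct on the reference set


def retrained_model(x: Sequence[float]) -> int:
    return int(x[0] > 0.7)  # misses the 0.6 case: 3/4 correct


deployed = gated_update(current_model, retrained_model, reference_set)
print(deployed is current_model)  # → True: the update is rejected
```

Because the reference set stays the same across updates, it also gives every locally adapted version of the algorithm a common yardstick, which partly addresses the concern about results diverging between institutions.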

So how will the FDA deal with continuous learning algorithms in AI medical software?

Some of these challenges can, and frankly need to, be addressed by the FDA, especially when it comes to guaranteeing algorithm performance. In its press release, the FDA outlines four elements it wants to include in its new review framework for continuously learning AI algorithms in healthcare. For now these are only outlines (nothing is set in stone), but the following aspects are on the wish list:

- The company's adherence to quality standards: the FDA wants to clearly outline its expectations for a company's quality system and the good machine learning practices a company should follow.

- A pre-market device review to assure safety and effectiveness: before a company can enter the market with its software, the FDA wants to check the software's safety and effectiveness. The FDA also wants to make sure the company understands the risks throughout the software's entire life cycle. The company has to provide a plan explaining how it will control the continuous modifications to the algorithm while ensuring that the algorithm remains safe and effective for patients.

- A monitoring system for the device during its lifetime: companies should continuously monitor how the device evolves and check whether the algorithm modifications adhere to the plan submitted for the pre-market review. If they do not, a new FDA review may be required.

- A real-world performance measurement system: as the algorithm changes constantly, its performance should also be measured regularly. The FDA wants the company to have a system in place that measures the algorithm's real-world performance, at a minimum on a regular basis.
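How such a measurement system should work is not spelled out here. As a minimal sketch, assuming the algorithm makes binary findings and radiologist reads serve as ground truth, a monitor could track sensitivity and specificity over a sliding window of recent cases and flag when either drops below limits taken from the company's plan. `PerformanceMonitor`, `PerformanceLimits`, the thresholds and the window size are all hypothetical.

```python
from collections import deque
from dataclasses import dataclass
from typing import Deque, Optional, Tuple


@dataclass(frozen=True)
class PerformanceLimits:
    """Hypothetical acceptance limits, e.g. taken from the company's modification plan."""
    min_sensitivity: float
    min_specificity: float


class PerformanceMonitor:
    """Tracks real-world sensitivity and specificity over the most recent cases."""

    def __init__(self, limits: PerformanceLimits, window: int = 500) -> None:
        self.limits = limits
        # Each entry is (algorithm_positive, radiologist_positive); old cases drop out automatically.
        self.cases: Deque[Tuple[bool, bool]] = deque(maxlen=window)

    def record(self, algorithm_positive: bool, radiologist_positive: bool) -> None:
        self.cases.append((algorithm_positive, radiologist_positive))

    def metrics(self) -> Tuple[Optional[float], Optional[float]]:
        tp = sum(1 for a, r in self.cases if a and r)
        fn = sum(1 for a, r in self.cases if not a and r)
        tn = sum(1 for a, r in self.cases if not a and not r)
        fp = sum(1 for a, r in self.cases if a and not r)
        # None means the window holds no positive (or no negative) cases yet.
        sensitivity = tp / (tp + fn) if tp + fn else None
        specificity = tn / (tn + fp) if tn + fp else None
        return sensitivity, specificity

    def status(self) -> str:
        sensitivity, specificity = self.metrics()
        if sensitivity is None or specificity is None:
            return "insufficient data"
        if sensitivity < self.limits.min_sensitivity or specificity < self.limits.min_specificity:
            return "review needed"
        return "within plan"


monitor = PerformanceMonitor(PerformanceLimits(min_sensitivity=0.90, min_specificity=0.80), window=4)
for algorithm_says, radiologist_says in [(True, True), (False, False), (False, True), (True, True)]:
    monitor.record(algorithm_says, radiologist_says)
print(monitor.status())  # → review needed (sensitivity is 2/3 in this window)
```

A "review needed" flag like this one would be a trigger for checking the modifications against the agreed plan, not a regulatory verdict in itself.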

But what will the FDA actually do next regarding its regulation of AI medical software?

The FDA will work out its plans in more detail, and it is reaching out to the community for help in doing so. It has published a discussion paper titled “Proposed Regulatory Framework for Modifications to Artificial Intelligence/Machine Learning (AI/ML)-Based Software as a Medical Device (SaMD)”. The paper concludes with a list of 18 questions intended to gather feedback as input for the framework's development.² Are you eager to contribute to the new review framework the FDA is working on? Check out its discussion paper!

Bibliography

1. Caccomo, S. Statement from FDA Commissioner Scott Gottlieb, M.D. on steps toward a new, tailored review framework for artificial intelligence-based medical devices. (2019). Available at: https://www.fda.gov/NewsEvents/Newsroom/PressAnnouncements/ucm635083.htm. (Accessed: 3rd September 2019)

2. U.S. Food and Drug Administration. Proposed Regulatory Framework for Modifications to Artificial Intelligence/Machine Learning (AI/ML)-Based Software as a Medical Device (SaMD): Discussion Paper and Request for Feedback. (2019). pp. 1–20.