The ultimate guide to AI in prostate cancer

By Ory Six, MSc, Wouter Veldhuis, MD PhD, UMC Utrecht and Prof. O. Akin, MD, Memorial Sloan-Kettering Cancer Center

You can’t open a magazine or newspaper, or visit an online outlet, without coming across articles covering the topic of artificial intelligence (AI) in some way or another. In essential fields, such as healthcare, AI shows enormous potential, with an endless list of possible applications. Within this space, medical image analysis may very well be a front runner. Research on AI algorithms processing MRI scans, CT images and outputs of other imaging modalities has exploded over the past years. Additionally, many companies are working on bringing these algorithms to hospitals as software approved for clinical use. Other AI applications that come to mind are AI-powered Da Vinci robots, hospital databases that get smarter in organizing and analyzing data because of AI, or imaging holograms supporting surgeons during challenging surgeries.

What are the possible applications of AI in the context of prostate cancer management? With 1.3M new cases every year worldwide, prostate cancer is one of the most common cancers, for which AI could make a real impact in patient care.

To inform the urologist, radiologist, radiotherapist, pathologist, oncology nurse and all other physicians involved in the prostate cancer pathway of opportunities and challenges for AI in this area, we have developed The Ultimate Guide to AI in Prostate Cancer. Learn about the AI basics: how does it work and what are the limitations? Read about possible AI applications in the diagnostic workflow: how can AI help radiologists read prostate MRIs? What type of support can pathologists expect from algorithms? Discover ways in which AI can advance treatment planning and procedures: what are the options to improve radiotherapy for prostate patients using AI? How can AI bolster the surgeon in the operating room?

A brief intro to AI

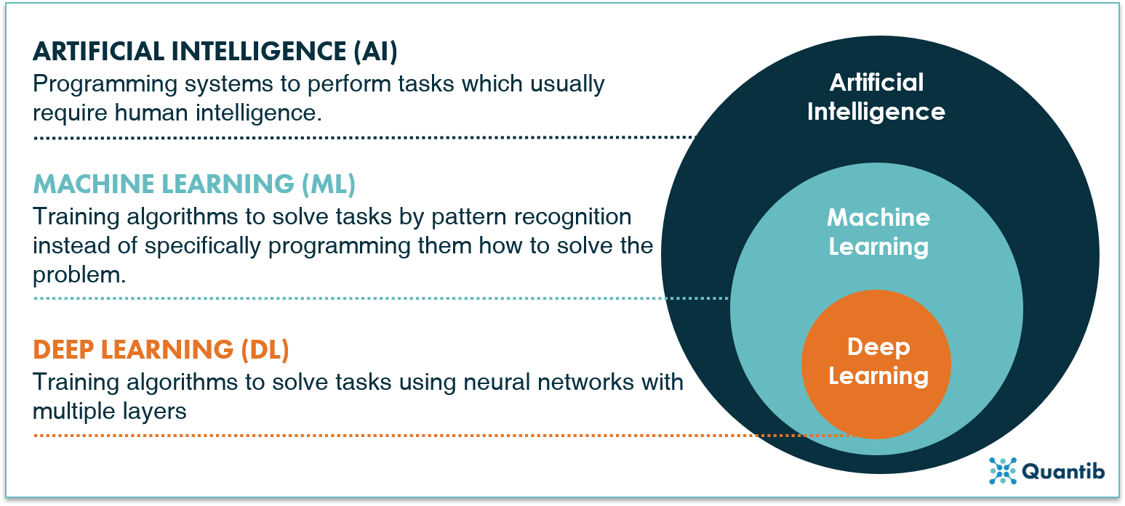

Artificial intelligence (AI) covers an extremely wide field of computer methods. Two terms heard often in connection to AI are machine learning and deep learning. Are these the same? No. Do they overlap? Yes. Machine learning (ML) is a subfield of AI (all ML methods are AI methods, but not all AI methods are ML methods). Much like this, deep learning (DL) is a subfield of ML (all deep learning is machine learning, but not all machine learning is deep learning). Figure 1 gives a schematic representation of the overall field.

Figure 1: Artificial intelligence has machine learning as a subfield, of which deep learning yet again is a subfield.

First, how does artificial intelligence work?

AI supports, or, in some cases, even replaces humans in performing certain tasks. Examples abound throughout society, and healthcare is no exception. Then how should we understand AI in this context? How can we define AI in healthcare?

AI concerns computers copying human behavior, or, at least, aiming to simulate humans in their intelligent tasks. In a healthcare context, we could then phrase a definition something like this:

“Artificial intelligence is a branch of computer science concerning the simulation of intelligent human behavior in computers to solve problems in the healthcare space.”

The applications can vary widely. AI algorithms can dig through patient databases, figuring out patterns and predicting the course a disease will take, or even act as surgical robots that perform surgeries all by themselves. But these systems can also do other things, like help send out reminders to people who might otherwise forget about their appointments, or support the education of medical specialists to keep them up to date with the latest developments in a medical field.

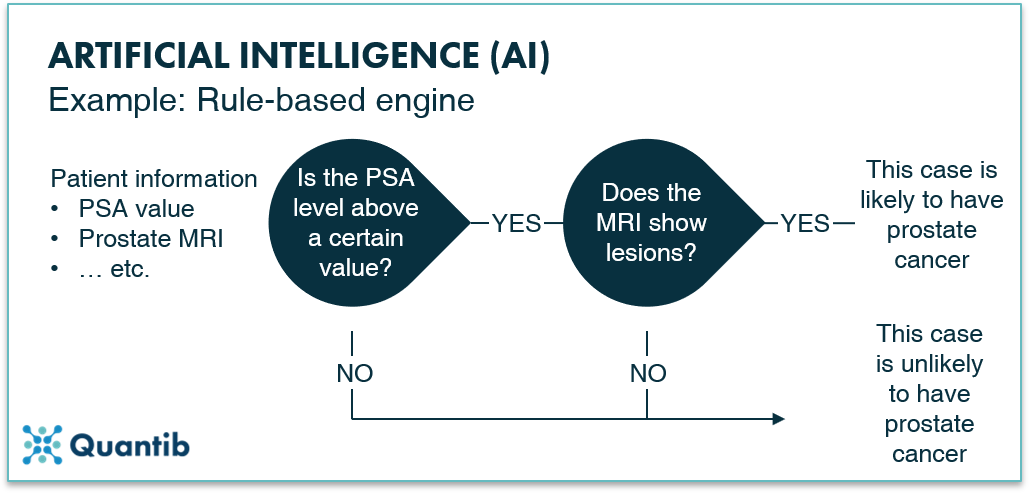

A concrete AI example: rule based engines

An example of a method that is artificial intelligence-based but does not belong to the ML and DL methods is a rule-based engine. The principle of this method is extremely simple. The algorithm just answers a list of questions with a set option of answers. For example, determining whether a patient is likely to have prostate cancer could go like this: Is the PSA value above or below a certain value? Above. In that case, ask question two: does the MRI show any lesions - yes or no? Yes. In that case, ask question three… etc. The algorithm can ask as many questions as you like, with a known set of answers to each question. After going through the whole list of questions, the computer will return a final answer to the overall problem: does this patient have prostate cancer? It is quite similar to an automated medical protocol.

Figure 2: An example of an artificial intelligence algorithm: a rule-based engine.
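The question list described above maps directly onto a handful of if-statements. The snippet below is a minimal sketch of that idea, assuming a hypothetical PSA cut-off of 4.0 ng/ml and made-up outcome labels; the function name, the threshold and the labels are illustrative assumptions and do not come from a clinical protocol.

```python
# Hypothetical cut-off, chosen only for illustration (not a clinical recommendation).
PSA_THRESHOLD_NG_ML = 4.0


def rule_based_triage(psa_ng_ml: float, mri_shows_lesion: bool) -> str:
    """Walk through a fixed list of questions, each with a known set of answers."""
    # Question 1: is the PSA value above the threshold?
    if psa_ng_ml <= PSA_THRESHOLD_NG_ML:
        return "low suspicion"
    # Question 2: does the MRI show any lesions?
    if not mri_shows_lesion:
        return "intermediate suspicion"
    # A real protocol would continue with further questions here.
    return "high suspicion"


print(rule_based_triage(6.2, True))   # high suspicion
print(rule_based_triage(2.1, False))  # low suspicion
```

Nothing is learned from data here: every threshold and every branch is written down by a person, which is exactly what separates a rule-based engine from the machine learning methods discussed next.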

What is machine learning and how does it work?

There is a wide range of different methods that fall within the realm of machine learning; some of them belong to the field of deep learning, but we will get to that in a later section. Let us first dive into the different types of methods that are machine learning, but not deep learning, to get an idea of the different problems machine learning can solve.

The difference between classification, regression, and clustering methods

Machine learning algorithms can assist us in many ways. To get a sensible grasp of how exactly they do so, this section explains three types of methods: classification algorithms, regression algorithms, and clustering algorithms.

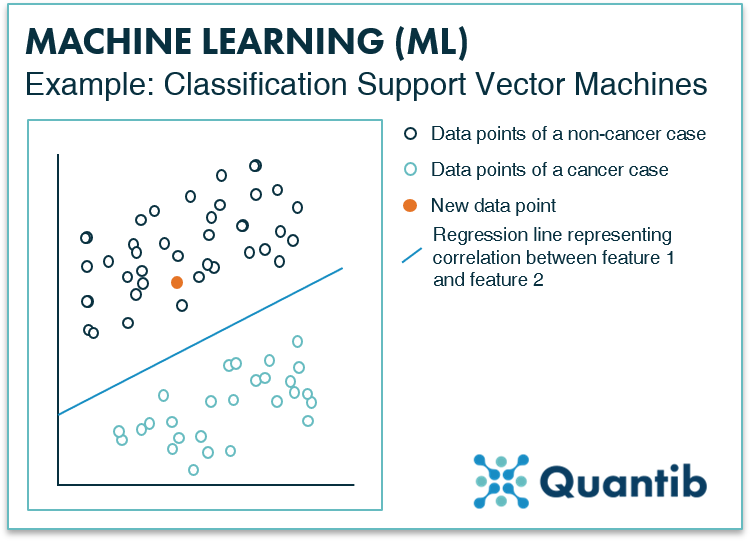

Classification methods assign classes to the input they get. So, an algorithm that tells whether a patient has prostate cancer (yes or no) based on, for example, PSA values and other inputs such as age, estimated prostate volume, and other clinical variables, is considered a classification algorithm. An algorithm that provides a PI-RADS score for a prostate MRI falls within this category as well. A specific example of such a method is called a Support Vector Machine.

Figure 3: What a Support Vector Machine, or SVM for short, does is look closely at the training data and determine what features make the difference between one class (a non-cancer case) and the other (a cancer case). When represented in a graph, this looks like figure 3, where the SVM has determined a line (blue) that represents the border between the cancer and the non-cancer cases. For a new case, represented by the orange dot, it can then easily be checked to which class it belongs by placing it in the same graph and observing on which side of the line it ends up. If interested in a more detailed description of how this method works, please visit The Ultimate Guide to AI in Radiology.
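Checking on which side of the line a new case ends up comes down to evaluating one signed number. The sketch below shows only that final step and assumes the boundary has already been determined; the weights, the bias and the two feature values are hypothetical, whereas a trained SVM would learn them from the training data.

```python
def decision_value(features, weights, bias):
    """Signed score: positive on one side of the boundary, negative on the other."""
    return sum(w * x for w, x in zip(weights, features)) + bias


def classify(features, weights, bias):
    """Assign the class that belongs to the side of the line the case falls on."""
    return "cancer" if decision_value(features, weights, bias) > 0 else "non-cancer"


# Hypothetical boundary; a trained SVM would learn these numbers from data.
WEIGHTS = [1.0, 0.5]
BIAS = -5.0

new_case = [4.0, 3.0]  # two made-up feature values for the new (orange) case
print(classify(new_case, WEIGHTS, BIAS))  # 4.0 + 1.5 - 5.0 = 0.5 > 0, so: cancer
```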

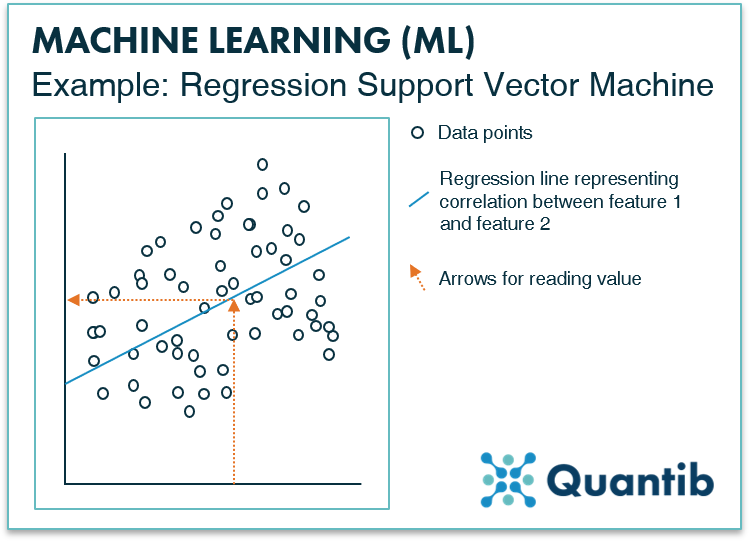

Regression methods determine a continuous value based on the input. Basically, they determine a function that outputs a value for the given input value (or input values). An example could be a risk score for prostate cancer based on the PSA value (e.g. the chance of prostate cancer is 30% based on a PSA level of 6.2 ng/ml). SVMs are often used for regression problems as well.

Figure 4: Similar to the SVM for classification problems, a regression SVM works with a line that is determined based on a dataset with known input and output values. As can be seen in the figure, a regression SVM does not work with different classes; it simply defines the line that best matches the relation between the input values (on the x-axis) and the output values (on the y-axis). For every new case, you can simply check what output value goes with which input value, e.g. which Gleason score would relate to a certain PSA value. A more extensive explanation of this algorithm in a regression context can be found in The Ultimate Guide to AI in Radiology.
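To make the idea of fitting a line to known input and output values concrete, the sketch below uses ordinary least squares. Note that this is a simpler stand-in, not a regression SVM, and the training pairs are made up for illustration.

```python
def fit_line(xs, ys):
    """Ordinary least-squares fit of y = slope * x + intercept."""
    n = len(xs)
    mean_x = sum(xs) / n
    mean_y = sum(ys) / n
    sxy = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys))
    sxx = sum((x - mean_x) ** 2 for x in xs)
    slope = sxy / sxx
    intercept = mean_y - slope * mean_x
    return slope, intercept


def predict(x, slope, intercept):
    """Read the output value that goes with a new input value off the line."""
    return slope * x + intercept


# Made-up training pairs: input value -> known output value.
inputs = [2.0, 4.0, 6.0, 8.0]
outputs = [10.0, 20.0, 30.0, 40.0]

slope, intercept = fit_line(inputs, outputs)
print(predict(5.0, slope, intercept))  # 25.0
```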

Clustering problems are relatively similar to classification problems. A clustering algorithm also classifies inputs in different groups. However, classification algorithms are usually trained in a supervised manner, i.e. with ground truth available. Clustering methods, on the other hand, are trained in an unsupervised way, meaning the algorithm itself must find a way to group inputs into classes that make sense. In healthcare, this is not a widely applied method, because you normally have a very good idea of what you want the output of your algorithm to be. It hardly ever happens that you want the computer to figure out a classification on its own. An example you can think of is defining new prostate cancer subtypes by training an unsupervised algorithm on a genomic database.1 Are you curious to see a more detailed explanation of the workings of a clustering algorithm? Visit The Ultimate Guide to AI in Radiology page for a description of a k-means clustering method.
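As a small illustration of unsupervised grouping, the sketch below runs a plain k-means loop on one-dimensional data. The values and the two starting centers are made up; no ground truth labels are passed in, so the algorithm has to find the groups itself.

```python
def k_means_1d(values, centers, iterations=10):
    """Plain k-means on 1-D data, starting from the given initial centers."""
    centers = list(centers)
    clusters = [[] for _ in centers]
    for _ in range(iterations):
        # Assignment step: each value joins the cluster of its nearest center.
        clusters = [[] for _ in centers]
        for v in values:
            nearest = min(range(len(centers)), key=lambda i: abs(v - centers[i]))
            clusters[nearest].append(v)
        # Update step: each center moves to the mean of its cluster
        # (an empty cluster keeps its previous center).
        centers = [
            sum(c) / len(c) if c else centers[i]
            for i, c in enumerate(clusters)
        ]
    return centers, clusters


data = [1.0, 1.2, 0.8, 5.0, 5.2, 4.8]  # made-up measurements
centers, clusters = k_means_1d(data, centers=[0.0, 6.0])
print(clusters)  # [[1.0, 1.2, 0.8], [5.0, 5.2, 4.8]]
```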

Neural networks - how do these fit in?

Artificial neural networks (let us abbreviate them to NN from now on) are a specific type of method that can do many different tricks. NNs are well suited to solving classification problems, but also to calculating a continuous value (i.e. a regression problem) or performing clustering-like tasks. These networks are very good at finding patterns that humans cannot easily see with the naked eye, or patterns for which no clear-cut rules exist to define or quantify them (for example, a subtle change in the shape or size of a lesion, or the heterogeneity within a lesion). Hence, you can just present the computer with a dataset and a ground truth and have it figure out the relationship between those on its own, without giving it too many directions.

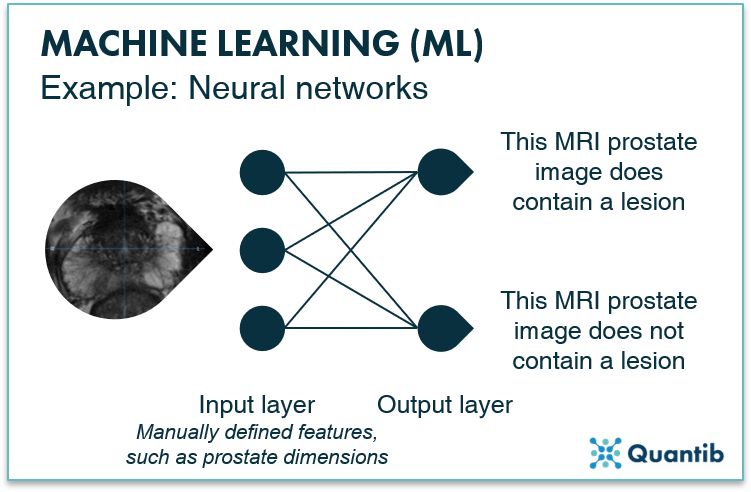

How do NNs work? An NN is often represented by an image similar to figure 5. As you can see, it consists of “layers” - an input layer and an output layer - both made up of nodes. The nodes of the input layer receive the input data (in our case a prostate MRI) and then calculations are performed before passing on the results of these calculations to the output layer. The output layer tells us the answer to the problem the neural network is supposed to solve. In the case of the network in figure 5, this would be whether the MRI prostate image contains a lesion or not.

Figure 5: An example of a machine learning algorithm: an artificial neural network (with no hidden layers).
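The calculation between the input layer and the output layer can be as simple as a weighted sum followed by a squashing function. The sketch below shows a single output node with a sigmoid; the three input values, the weights and the bias are hand-picked assumptions rather than trained values, and a real network would take a full image as input.

```python
import math


def sigmoid(z):
    """Squash any number into the range between 0 and 1."""
    return 1.0 / (1.0 + math.exp(-z))


def output_node(inputs, weights, bias):
    """Weighted sum of the input nodes, turned into a score between 0 and 1."""
    z = sum(w * x for w, x in zip(weights, inputs)) + bias
    return sigmoid(z)


# Made-up numbers: three input values and untrained, hand-picked weights.
pixels = [0.2, 0.9, 0.4]
weights = [0.5, 1.5, -1.0]
bias = -0.5

score = output_node(pixels, weights, bias)
print("lesion" if score > 0.5 else "no lesion")  # lesion
```

Training a network means adjusting the weights and the bias until the output matches the ground truth for as many training cases as possible.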

Then how does deep learning work?

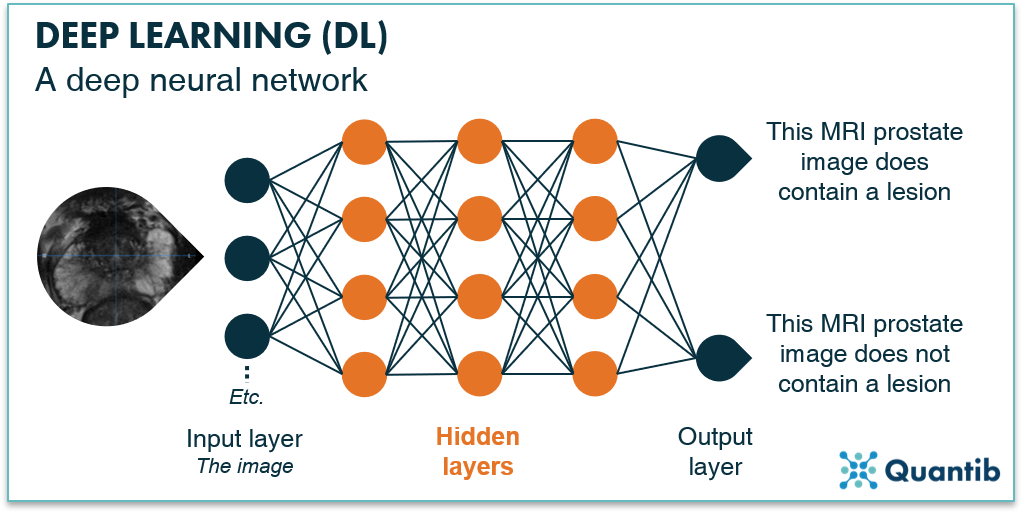

The last set of methods we will discuss all belong to the field of deep learning, which is a subfield of machine learning. The idea of deep neural networks is very similar to the NN we discussed before, with the addition that the network does not consist of just two layers, but contains one or more additional hidden layers between the input and output layer (see figure 6). These hidden layers are also made up of nodes and usually focus on detecting specific features in the image. For example, the first hidden layer typically looks at simple, low-level features such as edges in the image, which can help to locate larger organs. Hidden layers further down in the network combine this information, and can thereby focus on more detailed aspects, such as determining whether suspicious tissue is actually benign prostatic hyperplasia (BPH). To assess all these features, hidden layers perform extra calculations, offering the possibility of solving more complex problems with such a network compared to a non-deep-learning NN. If you are curious to learn more about how exactly neural networks work and how these calculations take place inside a node, check out our more extensive page on deep learning.

Figure 6: A deep neural network with an input layer, multiple hidden layers and an output layer. The neural network is trained to answer the question “Does this prostate MRI contain a lesion?”
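Adding hidden layers simply means repeating the same node calculation several times, with the output of one layer serving as the input of the next. The sketch below passes two made-up input values through one hidden layer of three nodes and one output node; all weights and biases are arbitrary assumptions, not trained values.

```python
import math


def sigmoid(z):
    """Squash any number into the range between 0 and 1."""
    return 1.0 / (1.0 + math.exp(-z))


def layer(inputs, weight_rows, biases):
    """One fully connected layer: every node sees every input."""
    return [
        sigmoid(sum(w * x for w, x in zip(row, inputs)) + b)
        for row, b in zip(weight_rows, biases)
    ]


def forward(inputs, layers):
    """Pass the input through each layer in turn; the last layer is the output."""
    activations = inputs
    for weight_rows, biases in layers:
        activations = layer(activations, weight_rows, biases)
    return activations


# Arbitrary, untrained weights: (weights per node, bias per node) for each layer.
network = [
    ([[0.5, -0.2], [0.1, 0.8], [-0.3, 0.4]], [0.0, 0.1, -0.1]),  # hidden layer
    ([[1.0, -1.0, 0.5]], [0.0]),                                  # output layer
]

output = forward([0.6, 0.3], network)
print(output)  # one value between 0 and 1
```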

AI and the prostate cancer pathway

After having addressed the workings and possibilities of AI methods in the previous sections, we can now look at what AI can do for the prostate cancer pathway. There are many options to apply artificial intelligence to diagnosing, treating, following and predicting the course of prostate cancer. In this section, we will discuss a wide variety of the ways in which AI can support physicians in improving results for prostate cancer patients.

A quick overview of the prostate cancer pathway

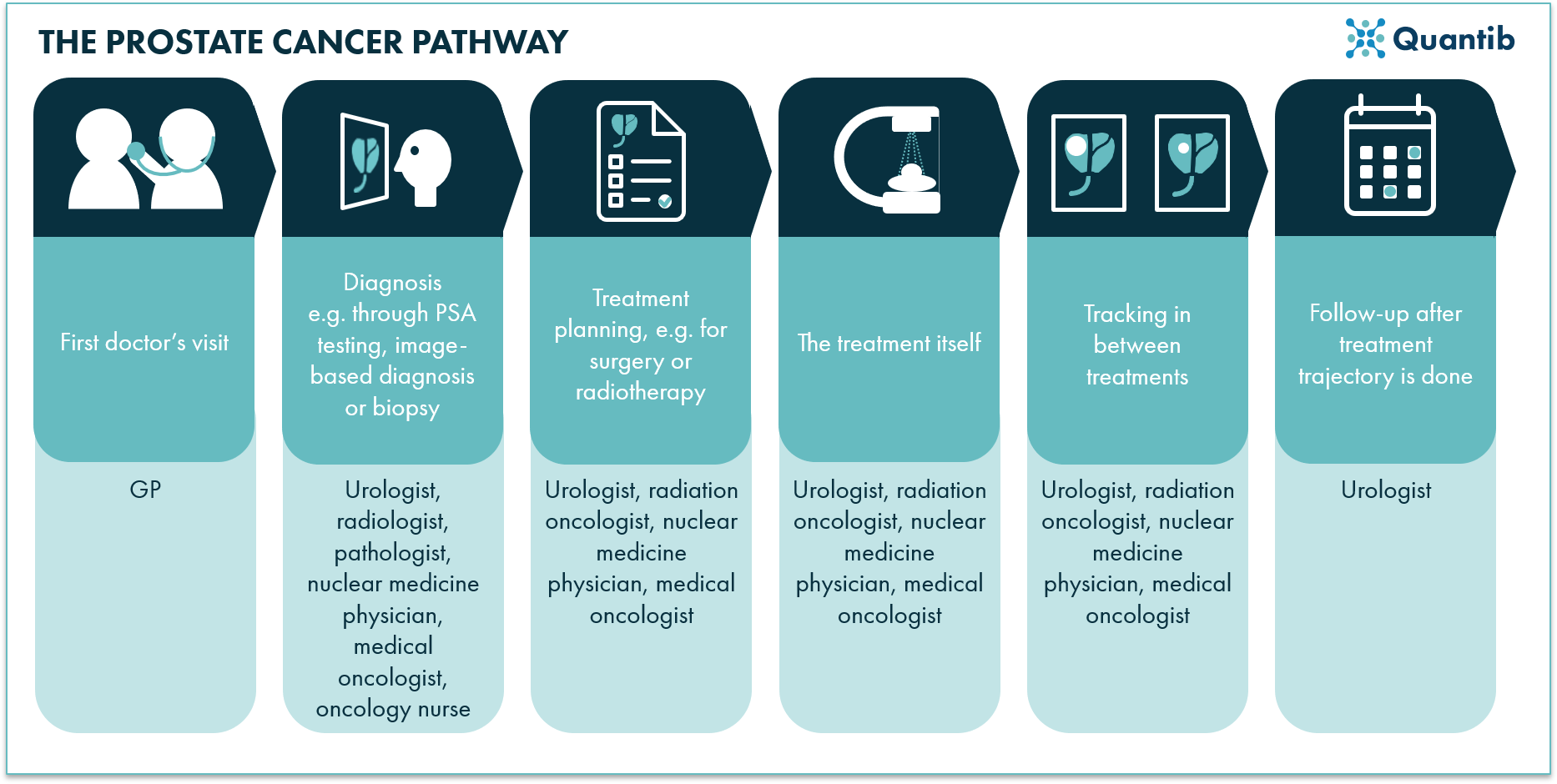

Before we turn to the application of AI in prostate oncology, a brief overview of the most common steps in the prostate cancer pathway will be discussed. This will be a convenient framework for the discussion of all the different ways that AI can support the prostate cancer pathway.

The first step is usually a visit to the general practitioner (GP), for example because the patient suffers from pain during urination or from frequent urges to urinate. The GP refers the patient to a urologist, who starts the hospital-based diagnosis trajectory, including measuring prostate-specific antigen (PSA) levels, increasingly an MRI exam, and pathology testing. After prostate cancer has been diagnosed, a treatment plan is made. This can be a more direct approach (usually radiation therapy or surgery), or a watchful approach, the active surveillance pathway.

Figure 7: A schematic overview of the prostate cancer pathway showing all steps from the initial doctor’s visit to the treatment process.

This short description already shows that many clinical actions are possible when a patient with a prostate cancer suspicion enters the hospital - many steps in which AI can possibly play a role. Below, we will investigate the options and elaborate on the possibilities for AI.

Initial steps in diagnosis

A GP may already perform a digital rectal exam (DRE) to investigate the prostate through palpation. If indicated, he or she may also order the PSA test. Both can also be performed by the urologist at the hospital the patient is referred to.

A PSA test is by far the most common initial exam for prostate cancer detection. It is a simple blood test checking for a protein produced in the prostate gland. Higher PSA levels indicate a higher chance of prostate cancer being present. Research (and experience!) has shown that the assessment provides good specificity (>90%); however, the reported sensitivity is not exactly impressive (~20%).2

Hence, performing solely a PSA test would lead to many false negatives.
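To see why a low sensitivity translates into many false negatives, it helps to write out both definitions. The sketch below uses a hypothetical cohort of 100 men with cancer and 100 without; the counts are made up to match the rough percentages quoted above.

```python
def sensitivity(true_pos, false_neg):
    """Share of patients with cancer that the test flags."""
    return true_pos / (true_pos + false_neg)


def specificity(true_neg, false_pos):
    """Share of patients without cancer that the test clears."""
    return true_neg / (true_neg + false_pos)


# Hypothetical cohort: 100 men with cancer, 100 without.
tp, fn = 20, 80  # a sensitivity of 20% means 80 of the 100 cancers are missed
tn, fp = 92, 8   # a specificity of 92% means 8 of the 100 healthy men are flagged

print(sensitivity(tp, fn))  # 0.2
print(specificity(tn, fp))  # 0.92
```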

A slightly more “sophisticated” way to report on PSA is by calculating the PSA density - the PSA value divided by the volume of the prostate - resulting in a value per volume (this attempts to correct for the variation in prostate gland size). However, this does require the determination of the prostate volume. This can be estimated through a DRE; however, image-based volume determination offers a more precise measurement.3
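The calculation itself is a single division. A minimal sketch, with made-up example values and a hypothetical function name:

```python
def psa_density(psa_ng_ml, prostate_volume_ml):
    """PSA density: the PSA value divided by the prostate volume (ng/ml per ml)."""
    if prostate_volume_ml <= 0:
        raise ValueError("prostate volume must be positive")
    return psa_ng_ml / prostate_volume_ml


# The same PSA value of 6.0 ng/ml in a small and in a large gland.
print(psa_density(6.0, 40.0))  # 0.15
print(psa_density(6.0, 80.0))  # 0.075
```

The two calls illustrate the correction for gland size: the same PSA value yields half the density in a gland that is twice as large.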

PSA testing and AI

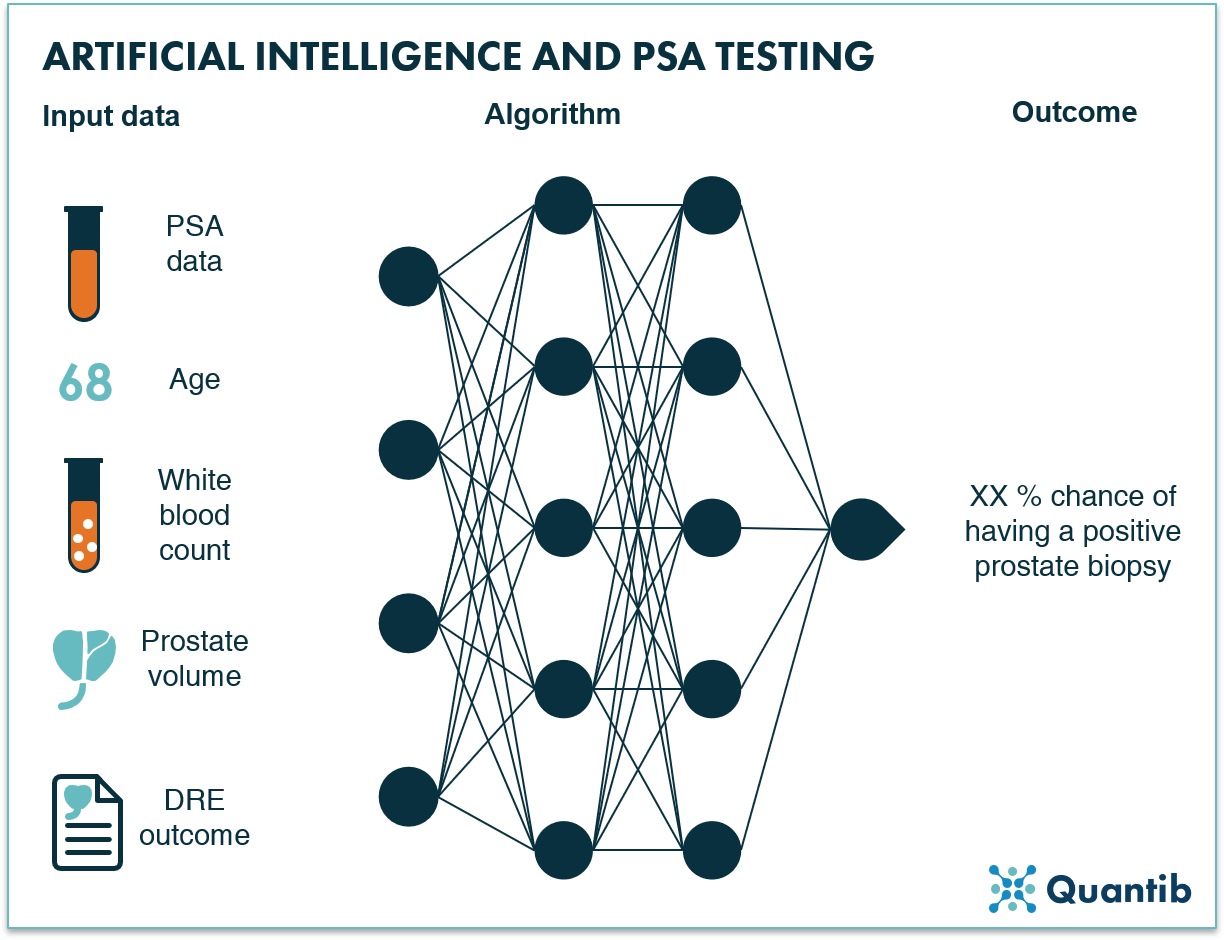

PSA testing is a simple “biological” exam: determining the PSA level in a patient’s blood is done through a laboratory test. As this is a chemical process, the options for deploying AI in the PSA level determination itself are limited. Where AI can help is by using the PSA value as an input to an algorithm that determines the risk of a positive prostate biopsy. Such algorithms often include other input data besides the PSA level, for example patient age, white blood cell count in urinalysis, (estimated) prostate volume, and the status of the digital rectal examination (DRE). Results show that just by including this extra patient information and training a neural network to classify prostate cases, increased sensitivity versus a regular PSA test can be achieved.4

Figure 8: An AI algorithm can use multiple inputs from the early diagnosis stage, such as PSA data, patient age and prostate volume, to determine the chance of a positive prostate biopsy.

Imaging of prostate cancer

Image-based diagnosis of prostate cancer is less standard than a quick PSA test; however, it is increasingly leveraged. Especially since the European Association of Urology (EAU) and the American College of Radiology (ACR) adjusted their guidelines on imaging for prostate cancer diagnosis, prostate MRI is taking flight.5,6

In this section, we will discuss multi- and biparametric MRI, (transrectal) ultrasound, and PET imaging more extensively. Additionally, we will touch briefly upon other imaging modalities which are less used in the prostate cancer pathway.

Multiparametric and biparametric MRI

Recently, EAU and ACR adjusted their guidelines for prostate cancer diagnosis, stating that it is advised to acquire an MRI before taking a biopsy, instead of merely post-biopsy for triaging. What does MRI in prostate cancer diagnosis entail exactly? A patient exam takes anywhere from 20 to 45 minutes, during which multiple sequences are acquired: a T1w scan, a T2w scan, a DWI scan, and, in case of multiparametric-MRI (mpMRI), a DCE scan.7

After image acquisition, a radiologist evaluates the obtained scans by performing anatomical measurements of the prostate (dimensions and volume), calculations of PSA density, and, of course, the assessment of the MRI scans - often using a dedicated hanging protocol including T2w, DWI and ADC maps, and a DCE scan (if available). During assessment of the peripheral zone, the DWI, and the derived ADC, are leading. The T2w scan serves as additional input in case of doubt. The transitional zone is primarily assessed on the T2w scan, with the DWI as extra input, if necessary. The DCE scan can be of additional value by confirming suspicious areas observed on T2w or DWI. All derived information is input for the determination of a PI-RADS score - the most commonly used classification system for the interpretation and reporting of prostate MRI.8 Lastly, a radiology report is created to communicate findings to the urologist and, if relevant, to be discussed in a multidisciplinary team meeting.

Figure 9: A schematic overview of the radiology reading process of prostate mpMRI scans.
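The zone-dependent reading order described above can be captured in a small lookup table. The sketch below only encodes which sequence is leading and which is additional per zone, as stated in the text; the names are hypothetical and it does not implement PI-RADS scoring itself.

```python
# Reading order per zone as described above: (leading input, additional input).
READING_ORDER = {
    "peripheral zone": ("DWI/ADC", "T2w"),
    "transitional zone": ("T2w", "DWI/ADC"),
}


def sequences_to_review(zone, dce_available=False):
    """Return the sequences in the order a reader would consult them."""
    leading, additional = READING_ORDER[zone]
    sequences = [leading, additional]
    if dce_available:
        # DCE can confirm suspicious areas seen on T2w or DWI.
        sequences.append("DCE")
    return sequences


print(sequences_to_review("peripheral zone"))          # ['DWI/ADC', 'T2w']
print(sequences_to_review("transitional zone", True))  # ['T2w', 'DWI/ADC', 'DCE']
```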

MRI supported by AI

As the prostate MRI reading process is relatively complicated and involves many steps, it offers a wide range of opportunities for the application of AI. What if AI could help novice radiologists assess the complex mpMRI exams at the same level as seasoned experts? What if AI could automate cumbersome steps in the process to speed up the reading workflow? Or improve reproducibility and detect subtle changes during active surveillance monitoring? These are value additions that are worth investigating.

AI for automation of volume measurements and PSA density calculation

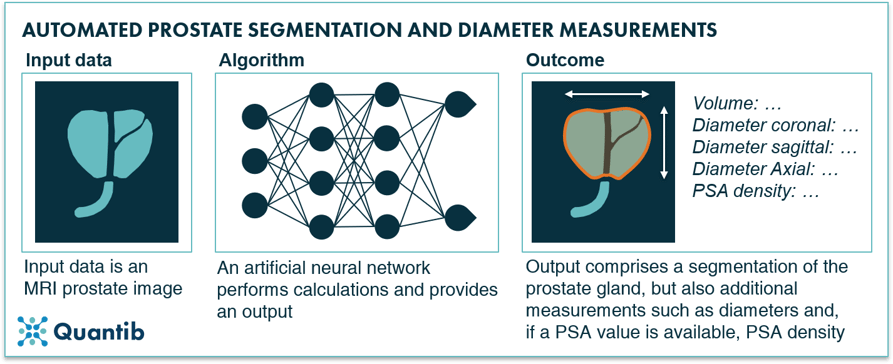

There are several ways to measure the volume of the prostate gland using an MR image. Mostly, radiologists perform a manual measurement on the T2w scan of the prostate in three directions, followed by an estimation of the volume using an ellipsoid volume calculator.9,10 However, as the terminology suggests, this provides an estimate, not a precise value, as hardly any prostate has the exact shape of an ellipsoid. More accurate volumetry would require segmenting the prostate gland on each MRI slice separately. This can be done manually, but one can imagine it would be a cumbersome and highly time-consuming task, and it is, therefore, not feasible in day-to-day clinical practice. In this setting, only automation would make such a measurement accessible, as it allows for an essential increase in both speed and accuracy, and, therefore, a reduction in radiology workload.
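The difference between the two approaches is easy to see in code. The sketch below puts the standard ellipsoid formula next to a voxel-counting volume computed from a segmentation; the measurements, the tiny mask and the voxel size are made up, and the function names are hypothetical.

```python
import math


def ellipsoid_volume_ml(length_cm, width_cm, height_cm):
    """Ellipsoid approximation: V = pi/6 * L * W * H (1 cm^3 equals 1 ml)."""
    return math.pi / 6.0 * length_cm * width_cm * height_cm


def segmented_volume_ml(slices, voxel_volume_mm3):
    """Volume from a segmentation: count the gland voxels on every slice."""
    voxel_count = sum(value for mask in slices for row in mask for value in row)
    return voxel_count * voxel_volume_mm3 / 1000.0  # 1000 mm^3 per ml


# Three manual diameters, in cm.
print(round(ellipsoid_volume_ml(4.0, 4.0, 3.0), 2))  # 25.13

# A toy segmentation of two 2x2 slices; 1 marks a voxel inside the gland.
tiny_mask = [[[0, 1], [1, 1]], [[1, 1], [0, 0]]]
print(segmented_volume_ml(tiny_mask, voxel_volume_mm3=2.0))  # 0.01
```

The ellipsoid formula needs only three numbers, which is why it is practical by hand; the voxel count follows the true outline of the gland but requires a segmentation of every slice.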

Many research groups around the world have investigated automated segmentation of the prostate gland using AI-based algorithms. It is most definitely feasible, but as it turns out, technically hard to get right in real-life practice. As a result, studies on machine learning approaches have not reported unambiguous success. Reports range from “automated segmentation […] are not always representative for the prostate anatomy” to “good segmentation performance” in research settings.11,12 Deep learning approaches have shown more promising results, with Dice scores (a measure of the overlap between the algorithm’s output and a ground truth) in the range of 80-90%.13–20 If the segmentation results are required to be more accurate, the user could be given a user interface to manually adjust the initial segmentation as seen fit. This approach would allow for faster adoption of AI in clinical practice, as it avoids waiting until research results with deep learning algorithms show accuracy scores that are nearing 100%.
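The Dice score mentioned above is twice the size of the overlap divided by the combined size of the two segmentations. A minimal sketch, representing each segmentation as a set of voxel coordinates; the two toy segmentations are made up.

```python
def dice_score(predicted, reference):
    """Dice = 2 * |A and B| / (|A| + |B|) for two sets of voxel coordinates."""
    predicted, reference = set(predicted), set(reference)
    if not predicted and not reference:
        return 1.0  # two empty segmentations agree perfectly
    overlap = len(predicted & reference)
    return 2.0 * overlap / (len(predicted) + len(reference))


algorithm_output = {(0, 0), (0, 1), (1, 0), (1, 1)}
ground_truth = {(0, 1), (1, 1), (1, 2), (2, 1)}

print(dice_score(algorithm_output, ground_truth))  # 0.5
```

A score of 1.0 means the two segmentations are identical, and 0.0 means they do not overlap at all.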

Figure 10: An AI algorithm can segment the prostate gland based on a prostate MRI scan and provide additional measurements such as the prostate gland volume, diameters in three dimensions, and PSA density (if the PSA value is available).

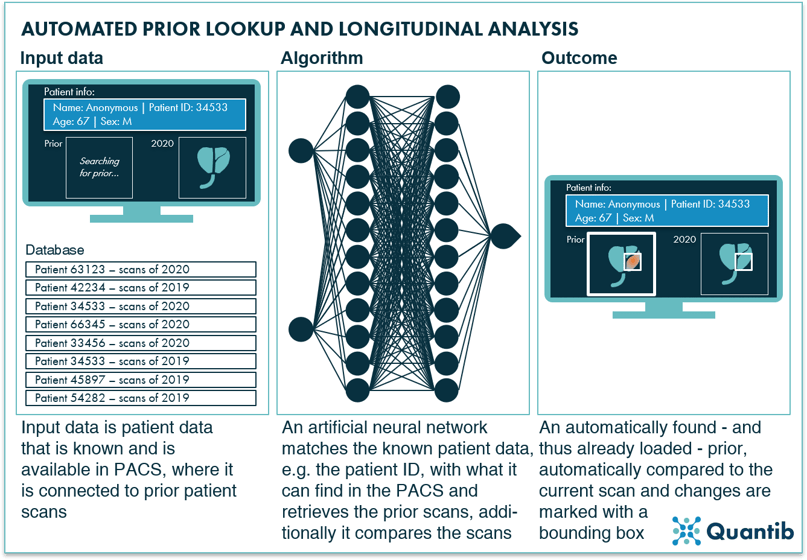

AI for automated prior lookup and longitudinal comparison

A possible step in the prostate mpMRI reading process is looking up prior exams and comparing them to the most recent exam. Priors are available in many cases and, as in all of radiology, a comparison is often a valuable source of information. Automating the retrieval and loading of this data (including, for example, information on prior surgery or applied radiation therapy) would be a nice-to-have; however, being able to compare an older scan to the most recent one and detect subtle changes would be considerably more valuable. AI-supported longitudinal comparison would help radiologists identify the smallest differences between scans that would otherwise go undetected.

Figure 11: AI algorithms are very well suited for the smart matching of specific information to large databases, to locate matches such as a prior prostate MRI scan and compare these priors to current images to detect subtle changes.

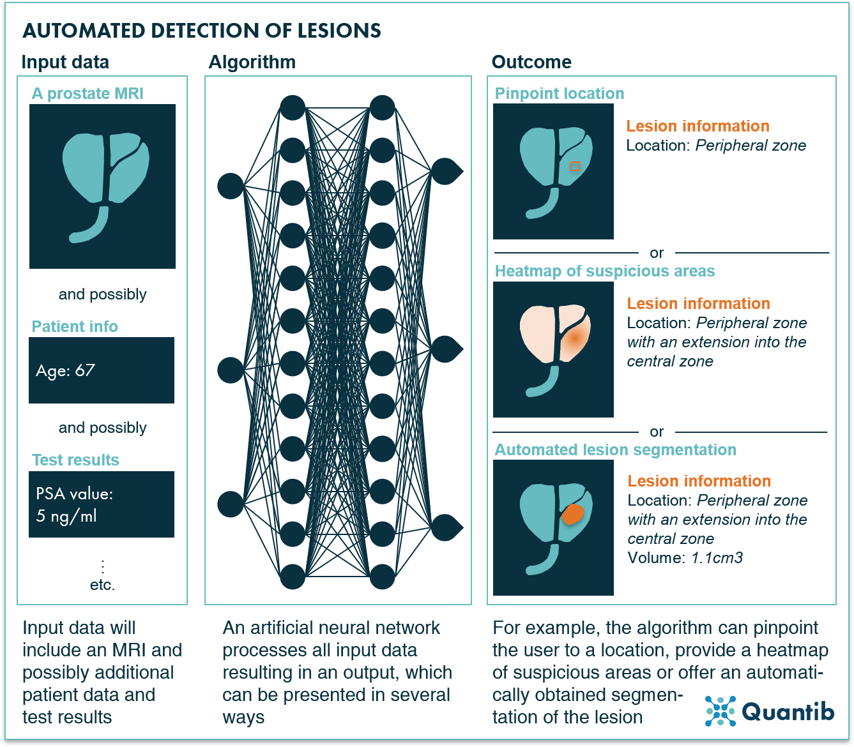

AI for prostate lesion detection and classification

As prostate lesion detection and characterization has proven a challenging task mostly reserved for the experts in the field,21,22 the potential value AI could offer by supporting the radiologist with scan assessment is substantial. In this context, both machine learning and deep learning methods have been investigated extensively.

The simplest approach consists of pointing out lesion locations in the prostate gland without segmentations or any other type of measurements. The suspicious areas are simply indicated by a box drawn around them or pointed out with an arrow or a dot.

Another option is to present the results as a heatmap or a probability map. The full prostate gland can be shown with an overlay highlighting suspicious areas with a different color from the rest of the organ.23 A major benefit of this approach is that the user has full control over the final segmentation. The heatmap can be used to determine this lesion segmentation more accurately, but the software itself does not get to a final diagnosis, i.e. take the doctor’s place. From the AI company’s point of view, this leads to another advantage, namely, the fact that regulatory bodies are not big fans of software pretending to be doctors. Getting regulatory approval for AI software that provides input for a diagnosis is a lot easier. At the same time, the required interaction of the radiologist can also be a downside - the method is not fully automated, and the radiologist still needs to invest time to determine if a lesion is present, locate lesion boundaries, determine volume, etc.
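One simple way to go from a probability map to a highlighted overlay is to apply a threshold. The sketch below assumes a small made-up 2D map of per-pixel probabilities and a hypothetical threshold of 0.5; it only marks candidate areas and leaves the final segmentation and the diagnosis to the reader.

```python
def suspicious_mask(probability_map, threshold=0.5):
    """Mark every pixel whose probability reaches the threshold."""
    return [
        [1 if p >= threshold else 0 for p in row]
        for row in probability_map
    ]


# Made-up probabilities for a 2x2 patch of the prostate.
heatmap = [
    [0.10, 0.70],
    [0.55, 0.30],
]

print(suspicious_mask(heatmap))  # [[0, 1], [1, 0]]
```

Lowering the threshold highlights more tissue and raising it highlights less, so the choice of threshold shifts the balance between missed lesions and false alarms.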

Lastly, there is full blown segmentation. In this case, the algorithm provides a segmentation of all lesions it can detect in the prostate gland.24,25 This segmentation can be fully automated, but at the same time, it can still allow for manual adjustments in case the reading radiologist feels improvements are needed. For further reading, Dai et al. included a long list of their literature review results in their 2019 paper on both prostate gland and lesion segmentation.26

Figure 12: AI algorithms are able to detect prostate lesions using a prostate MRI exam, and optionally additional inputs, as input data.

An interesting research example to mention is the deep learning approach used by Zhao et al. They evaluate the influence of using different image features on algorithm performance. Investigated features include, for example, a value for the overall brightness of the image, and a measure for the gray level distribution. This can be useful input if you are working on an algorithm to determine whether a case has prostate tumors, or if you want to create a lesion segmentation algorithm. The determined features can be great indicators for relevant voxels to assess.27

After detection, the first question is, of course, “Is this a clinically significant lesion?” Several research groups have investigated the ability of machine learning algorithms to answer this question. For example, Bernatz et al. compare the application of several ML approaches to ADC maps and conclude that results vary. Including AI algorithms in the diagnostic workflow can improve or reduce diagnostic performance.28 Bonekamp et al. found an improvement in clinical assessment upon adoption of a radiomic machine learning approach during reading.29 Taking a step back and looking at different combinations of several MRI sequences and the extent of the role they (can) play in a deep neural network trained for classification of prostate lesions, Aldoj et al. found that perfusion MR images are actually strongly leveraged by the algorithm, just as the DWI and ADC maps. Interestingly, the T2w scan had the least effect on algorithm performance.30

Incidentally, many deep learning algorithms are designed in such a way that detection and classification are combined into one step. Most of these approaches just highlight clinically significant lesions and ignore, for example, benign prostatic hyperplasia (BPH).31 Computers have reached performance similar to regular clinical PI-RADS scoring, so this is an idea worth investigating further.32

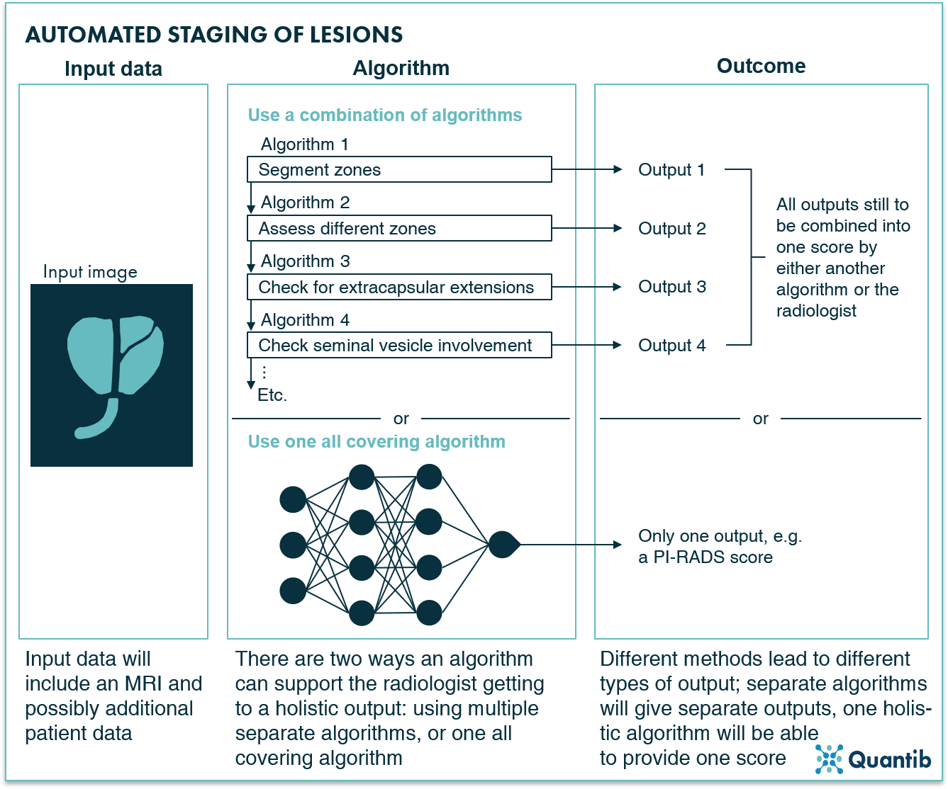

AI for PI-RADS scoring and tumor staging

Important input for a treatment decision is the PI-RADS score, after which the stage of the detected lesions is determined. Setting a PI-RADS score can be supported by AI in two ways. Firstly, all sub-steps a radiologist usually takes towards setting a PI-RADS score can be covered by a separate algorithm. This means the software (i.e. combination of all these algorithms) will output several input components needed to get to a final score. Determining the final score is up to the radiologist. Such an algorithm can, for example, segment the prostate into its different zones. As the assessment for PI-RADS scoring strongly depends on the zone you are looking at, defining where each zone starts and ends is highly relevant as a first step towards a PI-RADS classification.13,33–35 Another example is an algorithm that detects suspicious areas in a specific zone.36,37

Secondly, we can take the “shortcut” by training an algorithm that outputs the PI-RADS score directly.38 This second route may sound tempting, but is challenging to get approved by the regulatory bodies for clinical use. For this reason it is not an approach that is commonly pursued by AI radiology companies. A slightly more advanced version of such an algorithm has gotten significantly more attention in academia. This type of algorithm does not output a PI-RADS score, but a pathology-based Gleason score. Hence, the algorithm was trained to connect MR images with the Gleason score that was eventually determined using tissue samples. This means that, in theory, biopsy results could become available without ever taking a biopsy - minimizing the risk of complications.39–41 The feasibility of such an algorithm is confirmed once again by Winkel et al. who showed that supervised machine learning algorithms are able to output a score that demonstrates stronger correlation with Gleason scores than visually determined PI-RADS scoring.42

Figure 13: AI algorithms can determine the staging of lesions based on a prostate MR image in two ways: by deploying a separate algorithm for each characteristic that is relevant for lesion staging (leaving the setting of the PI-RADS score to the radiologists or an additional algorithm) or by leveraging an all-covering algorithm that will output the PI-RADS score directly.

Transrectal ultrasound

Transrectal ultrasound (TRUS) is mostly used for guided biopsies; however, the application has also been deployed for initial lesion detection. The procedure is relatively straightforward: an ultrasound probe is inserted in the rectum and US images are obtained, and, in case of a biopsy, biopsy needles are simultaneously inserted into the prostate to obtain tissue samples.43,44

TRUS can be used to estimate prostate volume,45 as well as to detect and stage lesions. Detection has been investigated since as early as the eighties; however, the potential seemed limited and conclusions included the expectation of a high false positive rate.46 Other research describes detection rates that vary across the prostate zones, and accuracy numbers that vary with tumor size. Unfortunately, overall results remained disappointing,47 leading to very little research on this topic since the nineties.

Deploying TRUS for the staging of prostate lesions has been reported as difficult, with meager performance at best.48,49 Different US techniques have been investigated (such as grayscale, color Doppler, and power Doppler); however, none showed accuracy scores for different stages of cancer that justified extensive further research.43

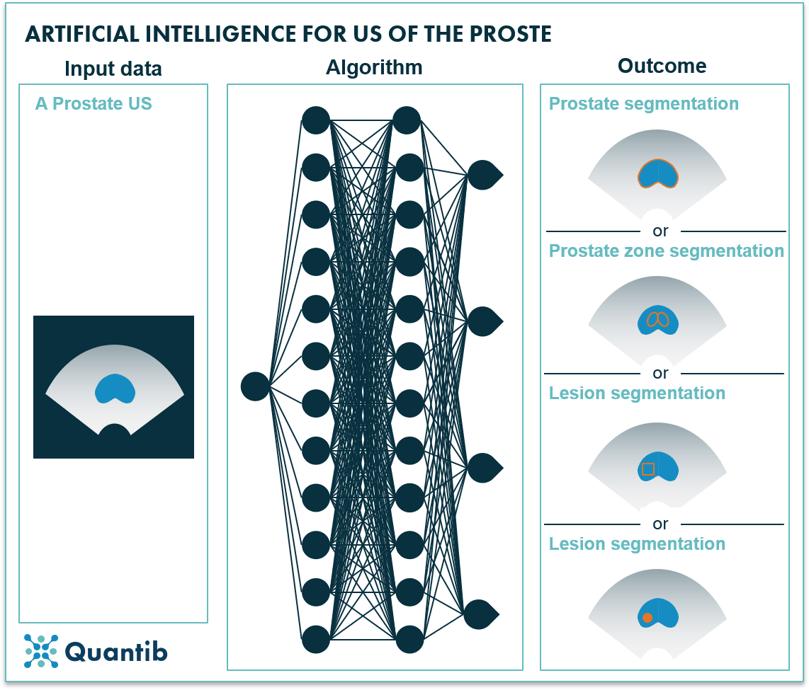

TRUS supported by AI

In the previous paragraph, we learned that obtaining insights from TRUS with the naked eye is not an easy task. Perhaps AI can offer support? There is definitely potential here. Automated prostate gland segmentation on TRUS images can provide the prostate volume in a faster and more objective way than manual measurements would. This can be of great benefit for PSA density calculations.19,50–52 Additionally, automated prostate zone segmentation has been investigated and shown to be feasible53 - an application that will add value in fusion-guided biopsy procedures. Detection and classification into cancerous and non-cancerous cases can be of great help during the diagnosis process. Academia has researched this application of AI only sparingly, and no valuable clinical applications have resulted.26,54–57 Additionally, lesion or ROI localization would be of tremendous value to support biopsies. AI-based methods have been investigated in the past for both single and multiparametric US and were shown to be able to detect prostate cancer as well as point out the clinically significant cases.58,59

Figure 14: Using an ultrasound image as input, an AI algorithm can determine prostate segmentation, prostate zone segmentation, lesion location or lesion segmentation.

PET

Positron emission tomography (PET), in the context of prostate cancer diagnosis, is mostly used for cancer staging, tracking the effects of radiotherapy, and the detection of metastases. It is often combined with another modality, such as MRI or CT, to add the crucial anatomical information.

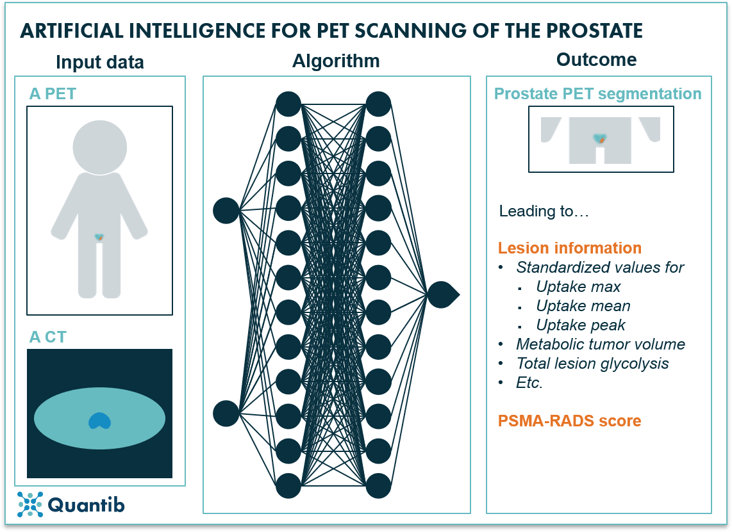

PET supported by AI

The field of artificial intelligence applied to PET for prostate cancer has not become very popular. Little research has been performed, as PET is not the best-suited modality for many relevant tasks in prostate cancer diagnosis. For example, segmentation of the prostate gland is often done using CT, as the anatomical information provided is significantly better than PET can offer. However, if a PET/CT is obtained, the CT scan can provide the prostate volume through gland segmentation, after which the PET scan can be registered to the CT and PET measurements in the prostate can support further diagnosis. Such tasks have been automated with the use of AI. Convolutional neural networks have been developed and tested for CT-based prostate gland segmentation and registration to PET, and provided results similar to manual assessment.60 Additionally, lesion detection in dynamic PET images using AI has been investigated. Again, these researchers used CT images to detect the prostate gland and register this information to the PET image.61

The next step consists of providing a more detailed diagnosis by classifying prostate lesions using PET. It is, for example, possible to determine the PSMA-RADS score, or even predict disease progression.62 A Johns Hopkins research team leveraged AI for such tasks and trained a convolutional neural network to provide a PSMA-RADS score. Their study led to an overall accuracy of 67.4% compared to a ground truth created by four nuclear medicine physicians.63

Figure 15: AI algorithms can use a PET scan and a CT scan as input to determine a prostate gland segmentation on the PET scan, additionally providing lesion information and possibly a PSMA-RADS score.

What about other imaging modalities?

Radiologists have more methods at their disposal than the ones discussed so far. However, remaining methods are less suited for prostate cancer diagnosis. For example, CT is rarely used for (initial) diagnosis. More commonly, it is deployed preceding radiation therapy to determine bone landmarks for planning support, or to check the patient for metastases.64

Any other improvements AI can offer in prostate cancer imaging?

In addition to the AI methods described above, there is a myriad of other ways computers can support the radiology part of the diagnosis process. One example is improving image quality by applying algorithms that implement all sorts of improvements in the acquisition process. A very simple example is an algorithm that checks image quality immediately after scanning and determines whether it is up to diagnostic standards or if the scan should be repeated. Another example is an algorithm that is trained to improve image quality after acquisition, e.g. by optimizing k-space before transformation to an MR image,65 or realizing scan time reduction with an AI algorithm that augments noisy images.
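
For readers unfamiliar with k-space, the sketch below shows the transformation mentioned above: an MR image is obtained from k-space data with an inverse Fourier transform. It uses a synthetic square phantom and NumPy's FFT routines. The helper names are hypothetical, and no AI-based k-space optimization is implemented here, only the plain transform that such an algorithm would precede.

```python
import numpy as np


def image_to_kspace(image):
    """Simulate centred k-space data from an image (forward 2D FFT)."""
    return np.fft.fftshift(np.fft.fft2(np.fft.ifftshift(image)))


def kspace_to_image(kspace):
    """Reconstruct a magnitude image from centred k-space (inverse 2D FFT)."""
    return np.abs(np.fft.fftshift(np.fft.ifft2(np.fft.ifftshift(kspace))))


# Synthetic phantom: a bright square on a dark background.
phantom = np.zeros((64, 64))
phantom[24:40, 24:40] = 1.0

kspace = image_to_kspace(phantom)
recon = kspace_to_image(kspace)
print("max reconstruction error:", float(np.max(np.abs(recon - phantom))))
```

An algorithm that works on k-space would modify `kspace` (for example, by filling in lines that were skipped to shorten the scan) before `kspace_to_image` is applied.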

Another area where AI can be of help is the standardization of images. This can be by either having an algorithm that aligns image acquisition across hospitals, or through an algorithm that adjusts the images after acquisition in such a way that the differences between scanners and settings are corrected for. Additionally, a strong registration algorithm should be able to determine slice orientations correctly, even if the patient was misaligned. An extended option is the standardization of image assessment by providing a standardized workflow including standardized reporting.

Pathology in prostate cancer

A standard procedure included in the prostate cancer diagnosis pathway is the acquisition of a biopsy. If an mpMRI was obtained and suspicious areas were detected, the samples can be collected either through cognitive or live imaging guidance. In other cases, generally 8, 10, or 12 tissue samples are collected from the prostate gland at bilateral sites.66 In the latter case, there is evidently a significant risk of missing the tumor if it is not located at a sample site.

After sample collection, the pathologist assesses the prostate gland tissue and determines a Gleason score. This score always consists of two numbers between 1 and 5, describing the two most common tissue patterns in the sample: the first number is the most prevalent pattern, the second the next most prevalent. On the scale, 1 refers to the most normal tissue, while 5 refers to the most pathological. So, if a pathologist observes mostly type 1 tissue and some of type 2, the score will be 1+2 = 3. Because the first number reflects the dominant pattern, a Gleason score of 4+3 = 7 is more severe than a 3+4 = 7 Gleason score.
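
The arithmetic above can be sketched in a few lines. The first function follows the description in the text. The second maps a score to an ISUP grade group, a convention not covered in this guide and included here only to show how 3+4 and 4+3 end up in different risk categories. That mapping is restricted to patterns 3 to 5, which is an assumption of this sketch, and both function names are hypothetical.

```python
def gleason_score(primary, secondary):
    """Sum of the most prevalent (primary) and second most prevalent pattern."""
    for pattern in (primary, secondary):
        if not 1 <= pattern <= 5:
            raise ValueError("Gleason patterns range from 1 to 5")
    return primary + secondary


def isup_grade_group(primary, secondary):
    """Map a Gleason score to an ISUP grade group (1 = least, 5 = most severe).

    Assumption of this sketch: only patterns 3 to 5 are accepted.
    """
    for pattern in (primary, secondary):
        if not 3 <= pattern <= 5:
            raise ValueError("this sketch only maps patterns 3 to 5")
    total = primary + secondary
    if total == 6:
        return 1
    if total == 7:
        # Same sum, different severity: the dominant pattern decides.
        return 2 if primary == 3 else 3
    if total == 8:
        return 4
    return 5  # totals of 9 or 10


for primary, secondary in [(3, 4), (4, 3)]:
    print(
        f"{primary}+{secondary} = {gleason_score(primary, secondary)}",
        "-> grade group", isup_grade_group(primary, secondary),
    )
```

Both examples sum to 7, yet the second lands in a higher grade group because pattern 4 is the dominant one.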

Pathology supported by AI

It is not hard to see that AI can provide similar support in the pathology area, as we previously described in the imaging section. AI has been amply deployed to detect cancer in pathology images. Research has shown that algorithms are up to this task by providing impressive accuracy scores. Tolkach et al., for example, claimed an accuracy of 98% compared to the standard approach of detection by pathologists. Other researchers reported AUCs of 0.98 to 0.99.67–70
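
Accuracy and AUC measure different things, which is worth keeping in mind when comparing such numbers. The sketch below computes both for a handful of invented scores. The data, the 0.5 threshold and the function names are assumptions for illustration and have no relation to the cited studies.

```python
def accuracy(labels, scores, threshold=0.5):
    """Fraction of cases where the thresholded score matches the label."""
    predictions = [1 if s >= threshold else 0 for s in scores]
    correct = sum(p == y for p, y in zip(predictions, labels))
    return correct / len(labels)


def auc(labels, scores):
    """Area under the ROC curve via pairwise ranking.

    Equals the probability that a randomly chosen cancer case receives a
    higher score than a randomly chosen benign case (ties count as half).
    """
    positives = [s for y, s in zip(labels, scores) if y == 1]
    negatives = [s for y, s in zip(labels, scores) if y == 0]
    if not positives or not negatives:
        raise ValueError("need at least one positive and one negative case")
    wins = sum(
        1.0 if p > n else 0.5 if p == n else 0.0
        for p in positives
        for n in negatives
    )
    return wins / (len(positives) * len(negatives))


# Invented toy data: 1 = cancer, 0 = benign.
labels = [0, 0, 1, 1]
scores = [0.1, 0.4, 0.35, 0.8]
print("accuracy:", accuracy(labels, scores))
print("AUC:", auc(labels, scores))
```

Accuracy depends on the chosen threshold, while AUC summarizes how well the scores rank cancer above benign cases across all thresholds.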

To make a Gleason score-setting algorithm less of a black box, an intermediate step can be to let the algorithm determine which areas in the sample show a certain Gleason pattern. In other words, the algorithm localizes and segments patches with the same Gleason pattern, creating a visual way for pathologists to assess the AI results.71

Prostate cancer treatment selection, planning, delivery and tracking

Once a diagnosis has been made and it is decided the patient should get treated for prostate cancer, a treatment can be selected, planned and set into motion. Subsequently delivery and tracking of the treatment are of utmost importance. How can AI support the different steps in this process?

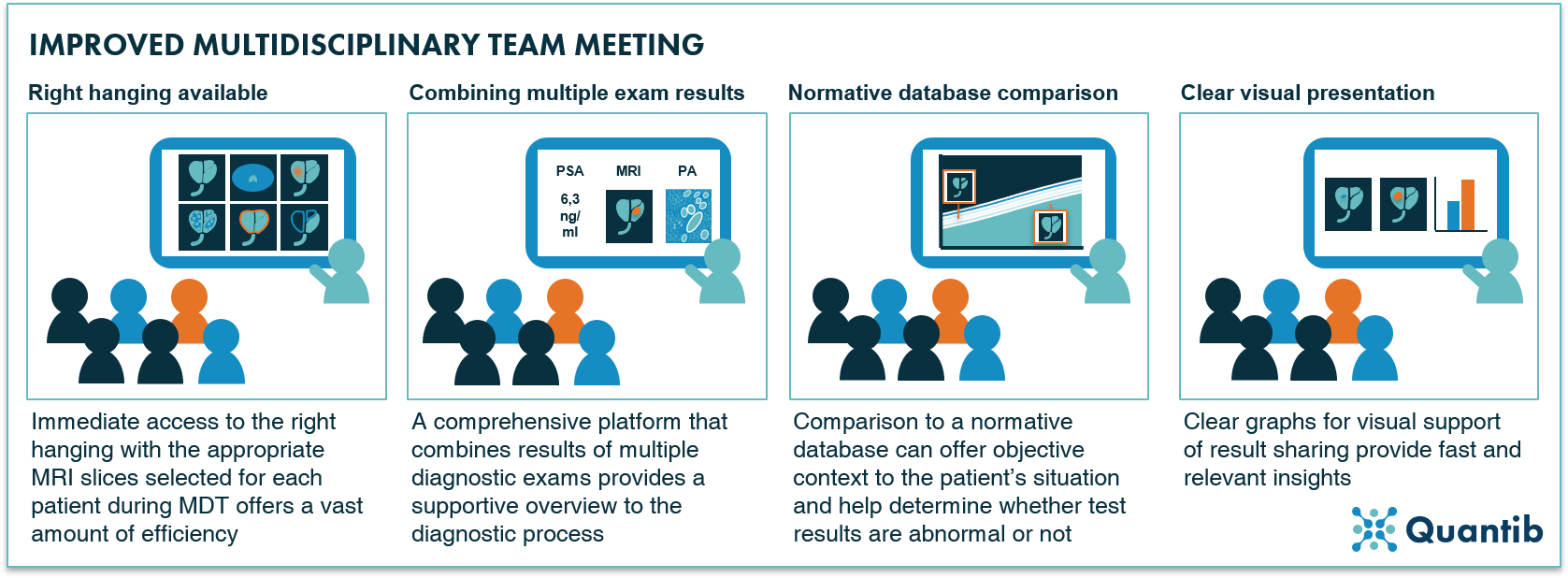

AI and prostate cancer treatment selection

The treatment selection process often includes a multidisciplinary team (MDT) meeting involving all physicians who have been part of the diagnostic process and all who might contribute to the treatment trajectory. How can AI be an addition to this already varied group of people? The high-level outline of an MDT meeting includes reviewing and discussing diagnostic results, weighing all treatment options and coming to a joint decision. All these steps can be supported by AI, for example by providing an all-round platform that automatically incorporates all results from the various examinations. At the same time, such a platform can include insightful graphs which immediately communicate the main insights. This might be as simple as integrating the relevant slices of the prostate MRI images into the platform, removing the need for separate logins to PACS and on-the-spot hanging arrangements during the MDT meeting. Additionally, an AI algorithm can display comparative data, such as normative curves, and present the patient’s data in the same graph, enabling comparison to a healthy population. Another option is to use the hospital’s database to compare the current patient to all previous suspected prostate cancer cases that this clinical facility has seen. While technically all AI analyses described in this paragraph are already possible, it may still be a long way until they are clinically available in their full glory, combined into a comprehensive solution.

Figure 16: Four examples of how AI can support a multidisciplinary team meeting.

A more exotic AI solution (and one that is most likely further away in the future) is to have an algorithm select a treatment based on all input gathered during the diagnosis process.72 This algorithm would need to be trained using an extensive database containing huge amounts of information on all patients, including PSA score, acquired images, pathology results and, last but not least, ground truths of the chosen treatment trajectory and the eventual outcome, i.e. the effect on a patient’s health. Collecting a database that is large enough to limit the risk of bias will be far from an easy task. Additionally, training will require a lot of computation time and some serious GPUs, but it is possible to create an algorithm that would advise the attending medical team about which treatment trajectory to start for a patient.

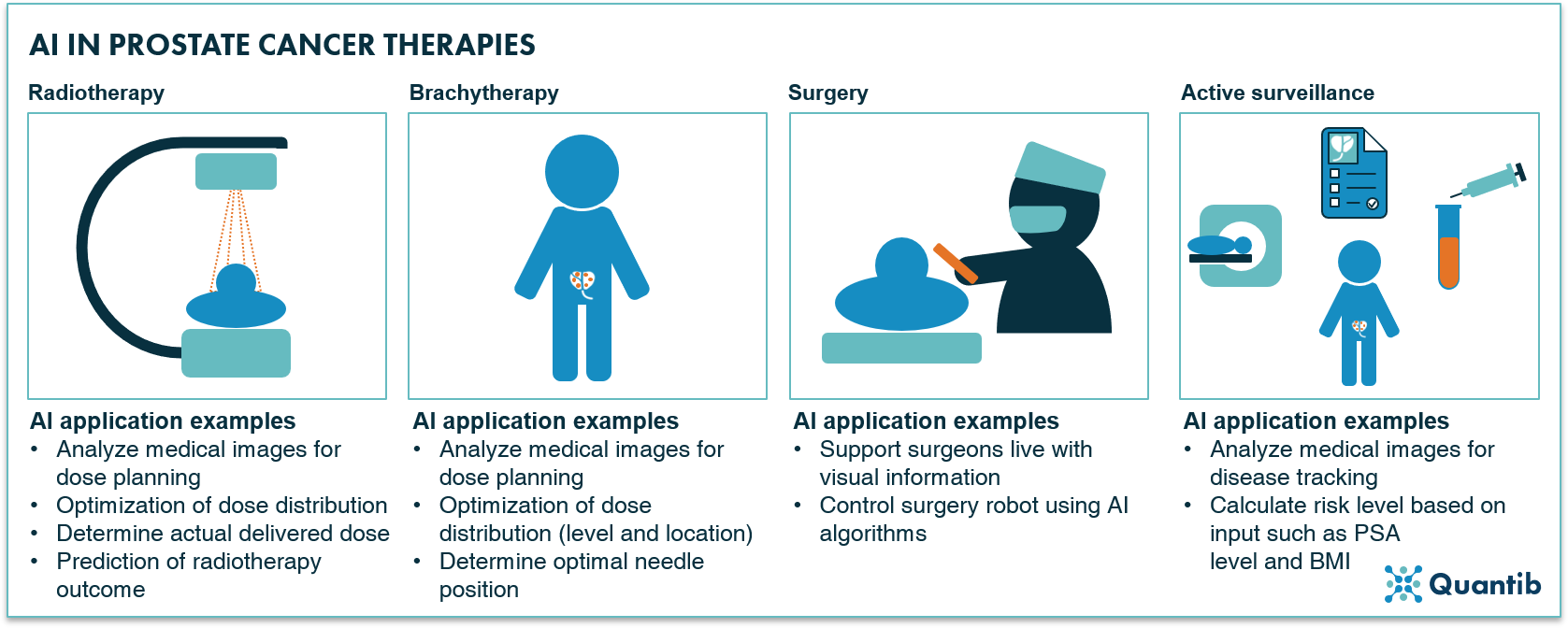

AI and prostate cancer treatment planning, delivery and tracking

After treatment selection, the treatment should be planned in detail and tracked throughout the course of the therapy. The options AI offers for support depend on the type of treatment that is chosen. This section describes radiotherapy, brachytherapy, surgery, and active surveillance, and the way AI can complement treatment processes involving these methods.

Radiotherapy

If a patient is scheduled to receive radiotherapy treatment for his diagnosed prostate cancer, a radiation therapist prepares a treatment plan. Such a treatment plan essentially has one goal: determining the optimal dose distribution in both location and time. An important input to this analysis is the delineation of the target volume and the organs at risk. MRI is a well-suited imaging modality for this purpose, as is CT. Hence, automated MRI- and CT-based segmentation can be of great help and is therefore widely discussed in the literature.73 To learn more about prostate segmentation, please have a look at the corresponding section for MRI.

In addition to automated segmentation of the prostate gland (and volumes at risk), automation of the optimization of dosimetric trade-offs can be of great value in radiotherapy planning.74 AI has been widely leveraged to predict dose distributions in various regions, among which head and neck and lung.75 Ma et al. used a deep learning approach to automate the calculation of the dose distribution for prostate cancer radiotherapy. Using only the planning target volume as input, the optimal dose volume histogram was determined and 98% of dosimetric endpoint checks were within the 10% error bound for selected organs at risk.76 This is a promising method for automated support of a task that can otherwise take hours. Besides focusing on dose distribution, AI can be leveraged to estimate the actual dose delivered to the patient. Deep neural networks have been investigated as a method to predict the actual delivered dose and were found to show excellent agreement with treatment planning dose maps.77 Eventually, the most interesting link to make is between treatment and outcome. Hence, outcome prediction is a much-investigated area, with a focus on, for example, toxicity prognoses and, in the case of prostate cancer, the risk of urinary symptoms such as incontinence.78,79
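
A dose volume histogram (DVH) summarizes, for one structure, which fraction of its volume receives at least a given dose. The sketch below computes a cumulative DVH from a dose array and a structure mask, and checks whether a predicted endpoint lies within a relative tolerance of a reference value. It is not the method of Ma et al.: the function names, the toy doses and the reading of the 10% error bound as a relative error are assumptions made for illustration.

```python
import numpy as np


def cumulative_dvh(dose_gy, mask, bin_width_gy=1.0):
    """Cumulative DVH: fraction of the structure receiving at least each dose."""
    doses = np.asarray(dose_gy, dtype=float)[np.asarray(mask, dtype=bool)]
    if doses.size == 0:
        raise ValueError("structure mask is empty")
    dose_levels = np.arange(0.0, doses.max() + bin_width_gy, bin_width_gy)
    volume_fraction = np.array([(doses >= level).mean() for level in dose_levels])
    return dose_levels, volume_fraction


def within_tolerance(predicted, reference, tolerance=0.10):
    """True if the prediction deviates at most `tolerance` (relative) from the reference."""
    return abs(predicted - reference) <= tolerance * abs(reference)


# Toy structure of four voxels; the fifth voxel lies outside the mask.
dose = np.array([10.0, 20.0, 30.0, 40.0, 99.0])
mask = np.array([True, True, True, True, False])

levels, fraction = cumulative_dvh(dose, mask)
print("fraction receiving >= 20 Gy:", fraction[20])  # 3 of 4 voxels
print("21 Gy vs 20 Gy within 10%:", within_tolerance(21.0, 20.0))
print("23 Gy vs 20 Gy within 10%:", within_tolerance(23.0, 20.0))
```

A learned model would predict the `fraction` curve, or endpoints derived from it, directly from the anatomy, and a check like `within_tolerance` is one way such predictions can be compared to the clinical plan.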

Brachytherapy

A specific form of radiation therapy is brachytherapy, in which radioactive sources are implanted into the prostate, close to the lesion, to allow for a very localized radiation dose. As this type of treatment requires a detailed treatment plan in which many factors play a role, AI offers a range of opportunities. Medical imaging usually is the starting point of brachytherapy treatment planning. Hence, tools that support automated medical imaging analysis (such as organ or lesion segmentation on MRI, CT or TRUS) can provide relevant input for an accurate and precise treatment plan.80 A subsequent step in which AI can provide support is the creation of dosage plans. Nicolae et al. trained a machine learning algorithm for the prediction of source patterns that come close to historic planning strategies. A significant improvement in required planning time was accomplished (almost 18 min for manual planning versus less than a minute for machine learning supported planning), while quality of the plans was comparable to those created by experts.81 Additional benefits of leveraging AI for treatment plan creation include possibly saving on the required number of seeds,82 determining optimal needle positioning, and dwell time determination, all possibly with more consistent quality than manual determination of these factors can offer.83

Surgery

Next to radiation therapy, surgery is a fairly common treatment procedure for prostate cancer, with the most common option being radical prostatectomy.84 What can AI contribute in the operating room to increase operation success rate? One futuristic, but technically not unrealistic, option is to train artificial intelligence algorithms that can augment the surgeon’s display during the procedure, e.g. by displaying the MRI or CT images directly on the surgical bed (in a way similar to augmented reality). This would be a useful application for the prostate as well as the lymph nodes and other critical structures. Such algorithms can provide real-time, visual information on, for example, cancer localization. Think of it as a live prostate projection including lesion location, location of the needles and perhaps additional real-time measurements. One step further is feeding all this information to a surgery robot that can adjust the course of the procedure automatically while executing.85 With a well-trained AI algorithm, such a robot can have access to the expertise of hundreds of specialized surgeons.

Active surveillance

Sometimes no treatment, at least not right now, is the best way forward. Hence, active surveillance is another frequently applied approach in prostate cancer cases. Of course, it is of utmost importance to keep a close eye on these patients and track whether a more active therapy should be started because the tumor is growing (more rapidly). Especially since patients selected for active surveillance typically have very small tumors which grow slowly, having support in detecting tiny changes can add significant value. Fortunately, AI can be of great help in this area.

It is not surprising that active surveillance leverages the same methods as the initial diagnosis trajectory of prostate cancer does. Imaging can play a big role. Hence, the previously discussed options for AI to support the acquisition and interpretation of prostate images are for the largest part also relevant in the context of active surveillance.86

However, many more options to track and guide patients are on the table. An example is the Pass Risk Calculator, which uses input such as previous biopsy results, PSA levels and BMI to calculate a patient risk profile for the next 5 years. It is not hard to imagine that the performance of such a tool could be substantially improved by training a deep learning-based algorithm on a large database and including many more factors than the current Pass Risk Calculator does.87

Other therapies: cryotherapy, hormone therapy, chemotherapy, immunotherapy, HIFU

Then there is a whole list of other possible, less frequently applied therapies. Cryotherapy, basically the freezing of the cancerous tissue in the prostate using gas inserted through needles, is mostly deployed in patients suffering from early-stage prostate cancer.88 The application of AI to cryotherapy in prostate cancer has not been investigated. However, one could think of AI-based temperature predictions that could support and advance the treatment procedure.89 Additionally, research focused on applying high intensity focused ultrasound (HIFU) showed promising results.90 Leveraging AI to improve HIFU results has not been investigated yet, but similarly to cryotherapy, AI-based temperature prediction could be of great value in HIFU treatment trajectories. Applications similar to those discussed in previous paragraphs about other therapies would also be interesting to investigate. For example, when combining (in-bore) HIFU with real-time imaging, lesion contouring has the potential to substantially improve ablation accuracy.

Other therapies include hormone therapy, chemotherapy and immunotherapy. Research on AI application in the context of these types of therapies has been very limited to nonexistent. Nonetheless, with the right database including patient data, therapy details and registration of the course of the disease, possible angles for research could include the prediction of therapy response and side effects. As these therapies are often used in metastatic patients, AI could be of use in tracking patients with many metastatic lesions where manual monitoring would be very tedious.

Figure 17: Radiotherapy, brachytherapy, surgery and active surveillance are amongst the most applied therapies for prostate cancer with a wide range of options for application of AI-based support.

AI and post-treatment follow-up

After the treatment has ended, there are many ways to keep track of the status of a patient. Most of those coincide with methods described in earlier paragraphs about the diagnosis trajectory. For example, periodic PSA tests and imaging exams may be included in post-treatment follow-up. Hence, AI-based options for support during this stage are comparable to those mentioned in the diagnosis chapter.

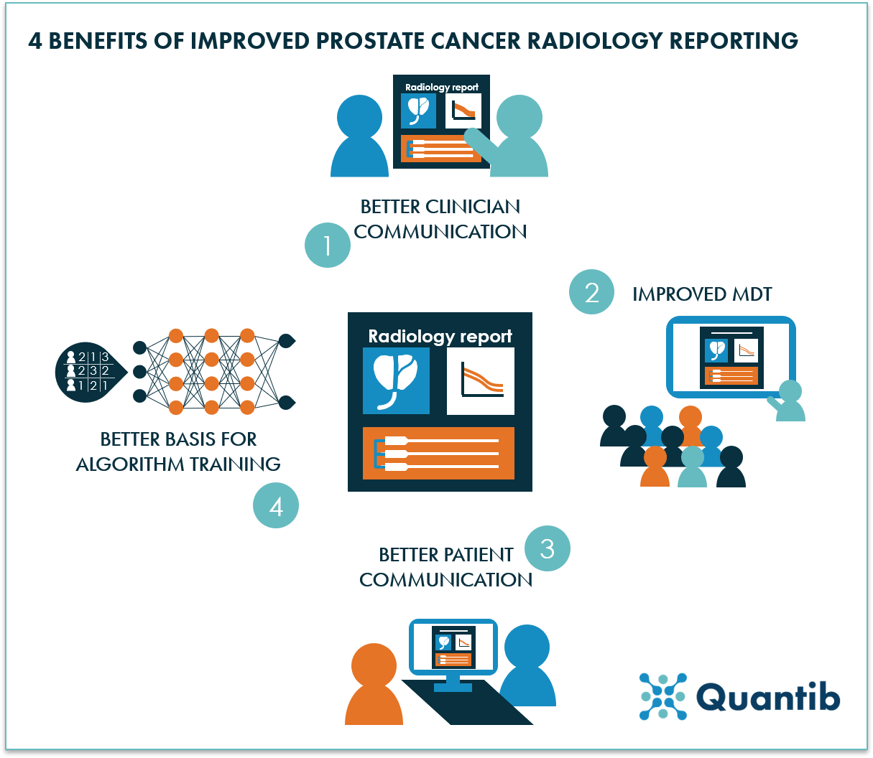

AI and prostate cancer reporting

Besides providing many options for support of reading medical images and contributing to the analysis of other types of diagnostic tests, AI is able to streamline and bolster the reporting of radiologists, urologists and pathologists. It allows for inclusion of quantified results in reports, possibly compared to normative databases, so that physicians have a strong grasp of the wider prostate cancer context and how their patient relates to this comparative data. Additionally, AI is a great method to leverage for standardized reporting. Standardized reporting will help radiologists to structure the way they build reports, i.e. radiologists are guided through specific steps so that reports from one radiologist will be extremely similar to those of other radiologists. In other words, this method will decrease inter-observer variability. This application does not require the most advanced AI. However, having some smart algorithms decide which (text) fields are relevant and should therefore pop up, based on information submitted in previous fields, can make the reporting process easier, more complete and more personalized. Another advantage of using AI for prostate cancer reporting strongly relates to the standardized reporting benefit, namely the opportunity to build a complete and consistent database. Such a database can help gain more insight into disease development, treatment response, etc., for example through academic research. It can also provide valuable input for the training of prospective AI algorithms.

Other benefits of using AI for reporting can be found in the previously mentioned MDT support through a universal platform incorporating all test results, faster reporting from radiologist to clinician, but also improved clinician – patient reporting by having insightful, AI-generated graphs and information ready to support conversations with a patient, and surely quite some we have not mentioned here.

Figure 18: Four examples of benefits that can be realized by optimized prostate cancer reporting.

In conclusion

We have discussed a wide variety of options to apply AI in the prostate cancer pathway. It is obvious that many encouraging opportunities lie ahead. Some may be the obvious ones that jump to mind when thinking about AI in healthcare, such as automated lesion detection support on medical images or in pathology results. Others are possibly less straightforward, but can nonetheless add substantial value. Examples can be found in needle location optimization for brachytherapy or smart ways to improve efficiency during multidisciplinary team meetings.

Of all examples discussed on this page some have already been researched and are on their way to clinical practice or are even available already to benefit patients right now. Others still need to be investigated to determine the value they might be able to add in the future.

Bibliography

- Gao, S. et al. Unsupervised clustering reveals new prostate cancer subtypes. Transl. Cancer Res. 6, 561–572 (2017).

- Ankerst, D. P. & Thompson, I. M. Sensitivity and specificity of prostate-specific antigen for prostate cancer detection with high rates of biopsy verification. Arch Ital Urol Androl 78, 125–129 (2006).

- Sanchez-chapado, M., Angulo, J. C., Garcia-segurae, J. M., Gonzalez-esteban, J. & Vallejo, J. M. R.-. Comparison of Digital Rectal Examination, Transrectal Ultrasonography, and Multicoil Magnetic Resonance Imaging for Preoperative Evaluation of Prostate Cancer. Eur. Urol. 32, 140–149 (1997).

- Stephan, C. et al. Multicenter Evaluation of an Artificial Neural Network to Increase the Prostate Cancer Detection Rate and Reduce Unnecessary Biopsies. Clin. Chem. 48, 1279–1287 (2002).

- N. Mottet, P. Cornford, R.C.N. van den Bergh, E. Briers, M. De Santis, S. Fanti, S. Gillessen, J. Grummet, A.M. Henry, T.B. Lam, M.D. Mason, T.H. van der Kwast, H.G. van der Poel, O. Rouvière, I.G. Schoots, D., T. W. Prostate cancer. Available at: https://uroweb.org/guideline/prostate-cancer. (Accessed: 17th November 2020)

- Andrew B. Rosenkrantz, M. et al. ACR - Prostate MRI Model Policy. Available at: https://www.acr.org/-/media/ACR/Files/Advocacy/AIA/Prostate-MRI-Model-Policy72219.pdf. (Accessed: 7th May 2020)

- Xu, L. et al. Comparison of biparametric and multiparametric MRI in the diagnosis of prostate cancer. Cancer imaging 19, (2019).

- Rhiannon van Loenhout, Frank Zijta, Robin Smithuis, I. S. Prostate Cancer - PI-RADS v2. Available at: https://radiologyassistant.nl/abdomen/prostate/prostate-cancer-pi-rads-v2. (Accessed: 17th November 2020)

- Cheng, P. Ellipsoid Volume Calculator. Available at: https://pcheng.org/calc/ellipsoid.html. (Accessed: 17th November 2020)

- Volume, Dimension & Density. Available at: https://www.mskcc.org/nomograms/prostate/volume. (Accessed: 17th November 2020)

- Bezinque, A. et al. Determination of Prostate Volume: A Comparison of Contemporary Methods. Acad. Radiol. 25, 1582–1587 (2016).

- Rundo, L. et al. Automated Prostate Gland Segmentation Based on an Unsupervised Fuzzy C-Means Clustering Technique Using Multispectral T1w and T2w MR Imaging. Information 8, (2017).

- Lee, D. K. et al. Three-Dimensional Convolutional Neural Network for Prostate MRI Segmentation and Comparison of Prostate Volume Measurements by Use of Artificial Neural Network and Ellipsoid Formula. Am. J. Roentgenol. 214, 1229–1238 (2020).

- Karimi, D., Samei, G., Kesch, C., Nir, G. & Salcudean, S. E. Prostate segmentation in MRI using a convolutional neural network architecture and training strategy based on statistical shape models. Int. J. Comput. Assist. Radiol. Surg. 13, 1211–1219 (2018).

- Tian, Z. PSNet: prostate segmentation on MRI based on a convolutional neural network. J. Med. Imaging 5, (2018).

- Clark, T., Wong, A. & Haider, M. A. Fully Deep Convolutional Neural Networks for Segmentation of the Prostate Gland in Diffusion-Weighted MR Images. ICIAR 97–104 (2017). doi:10.1007/978-3-319-59876-5

- Zhu, Q., Qikui, Z. & Du, B. Deeply-Supervised CNN for Prostate Segmentation. IEEE 178–184 (2017).

- Milletari, F. V-Net: Fully Convolutional Neural Networks for Volumetric Medical Image Segmentation. in Fourth International Conference on 3D Vision 565–573 (2016). doi:10.1109/3DV.2016.79

- Wang, B. et al. Deeply supervised 3D fully convolutional networks with group dilated convolution for automatic MRI prostate segmentation. Int. J. Med. Phys. Res. Pract. 46, 1707–1718 (2019).

- Cheng, R. et al. Active Appearance Model and Deep Learning for More Accurate Prostate Segmentation on MRI. Med. Imaging 9784, 1–9 (2016).

- Steenbergen, P. et al. Prostate tumor delineation using multiparametric magnetic resonance imaging: Inter-observer variability and pathology validation. Radiother. Oncol. 115, 186–190 (2015).

- Nobin, J. Le, Orczyk, C., Deng, F. & Melamed, J. Prostate tumour volumes: evaluation of the agreement between magnetic resonance imaging and histology using novel co-registration software. BJU Int. 114, 105–112 (2014).

- Lay, N. et al. Detection of prostate cancer in multiparametric MRI using random forest with instance weighting. J. Med. Imaging 4, (2017).

- Liu, X. et al. Prostate Cancer Segmentation With Simultaneous Estimation of Markov Random Field Parameters and Class. IEEE Trans. Med. Imaging 28, 906–915 (2009).

- Kohl, S. et al. Adversarial Networks for the Detection of Aggressive Prostate Cancer. in MICCAI (2017).

- Dai, Z. et al. Segmentation of the Prostatic Gland and the Intraprostatic Lesions on Multiparametic MRI Using Mask-RCNN. Adv. Radiat. Oncol. 5, 473–481 (2015).

- Kai, Z. et al. Prostate cancer identification: quantitative analysis of T2-weighted MR images based on a back propagation artificial neural network model. Sci. China Life Sci. 58, 666–673 (2015).

- Bernatz, S. et al. Comparison of machine learning algorithms to predict clinically significant prostate cancer of the peripheral zone with multiparametric MRI using clinical assessment categories and radiomic features. Eur. Radiol. 30, 6757–6769 (2020).

- Bonekamp, D., Kohl, S., Wiesenfarth, M., Schelb, P. & Philipp, J. Radiomic Machine Learning for Characterization of Prostate Lesions with MRI: Comparison to ADC Values. Radiology 289, 128–137 (2018).

- Aldoj, N., Lukas, S., Dewey, M. & Dewey, M. Semi-automatic classification of prostate cancer on multi-parametric MR imaging using a multi-channel 3D convolutional neural network. Eur. Radiol. 30, 1243–1253 (2019).

- Chen, Q. et al. A Transfer Learning Approach for Malignant Prostate Lesion Detection on Multiparametric MRI. Tech 18, 1–9 (2019).

- Schelb, P., Kohl, S., Radtke, J. P. & Wiesenfarth, M. Classification of Cancer at Prostate MRI: Deep Learning versus Clinical PI-RADS Assessment. Radiology 293, (2019).

- Adrian, O. Z. et al. Segmentation of prostate and prostate zones using deep learning. Strahlentherapie und Onkol. 196, 932–942 (2020).

- Liu, Y. et al. Automatic Prostate Zonal Segmentation Using Fully Convolutional Network with Feature Pyramid Attention. IEEE Access 7, (2019).

- Zhu, Y. et al. Fully Automatic Segmentation on Prostate MR Images Based on Cascaded Fully Convolution Network. J. Magn. Reson. Imaging 49, 1149–1156 (2018).

- Giannini, V. et al. A fully automatic computer aided diagnosis system for peripheral zone prostate cancer detection using multi-parametric magnetic resonance imaging. Comput. Med. Imaging Graph. 46, 219–226 (2015).

- Antonelli, M. et al. Machine learning classifiers can predict Gleason pattern 4 prostate cancer with greater accuracy than experienced radiologists. Eur. Radiol. 43–45 (2019).

- Sanford, T. et al. Deep-Learning-Based Artificial Intelligence for PI-RADS Classification to Assist Multiparametric Prostate MRI Interpretation: A Development Study. J. Magn. Reson. Imaging 52, 1499–1507 (2020).

- Id, J. T. et al. Radiomics and machine learning of multisequence multiparametric prostate MRI: Towards improved non-invasive prostate cancer characterization. PLoS One (2019).

- Citak-er, F. et al. Final Gleason Score Prediction Using Discriminant Analysis and Support Vector Machine Based on Preoperative Multiparametric MR Imaging of Prostate Cancer at 3T. Biomed Res. Int. (2014).

- Abdollahi, H. et al. Machine learning-based radiomic models to predict intensity-modulated radiation therapy response, Gleason score and stage in prostate cancer. Radiol. Med. 124, 555–567 (2019).

- Winkel, D. J. et al. Predicting clinically significant prostate cancer from quantitative image features including compressed sensing radial MRI of prostate perfusion using machine learning: comparison with PI-RADS v2 assessment scores. Quant. Imaging Med. Surg. 10, 808–823 (2020).

- Tangel, M. R. & Rastinehad, A. R. Advances in prostate cancer imaging. F1000Research 7, (2018).

- Hwang, S. Il & Lee, H. J. The future perspectives in transrectal prostate ultrasound guided biopsy. Prostate Int. 2, 153–160 (2014).

- Aprikian, S. et al. Improving ultrasound-based prostate volume estimation. BMC Urol. 19, 1–8 (2019).

- Carter, H. B. et al. Evaluation of transrectal ultrasound in the early detection of prostate cancer. J. Urol. 142, 1008–1010 (1989).

- Terris, M. K., Freiha, F. S., McNeal, J. E. & Stamey, T. A. Efficacy of transrectal ultrasound for identification of clinically undetected prostate cancer. J. Urol. 146, 78–84 (1991).

- Onur, R., Littrup, P. J., Pontes, J. E. & Jr, F. J. B. Contemporary impact of transrectal ultrasound lesions for prostate cancer detection. J. Urol. 172, 512–514 (2004).

- Harvey, C. J., Pilcher, J., Richenberg, J., Patel, U. & Frauscher, F. Applications of transrectal ultrasound in prostate cancer. Br. J. Radiol. 85, 3–17 (2012).

- Kachouie, N. N. & Fieguth, P. A Medical Texture Local Binary Pattern For TRUS Prostate Segmentation. in Proceedings of the 29th Annual International Conference of the IEEE EMBS 5605–5608 (2007).

- Tutar, I. B. et al. Semiautomatic 3-D Prostate Segmentation from TRUS Images Using Spherical Harmonics. 25, 1645–1654 (2006).

- Badiei, S., Salcudean, S. E., Varah, J. & Morris, W. J. Prostate Segmentation in 2D Ultrasound Images Using Image Warping and Ellipse Fitting. in MICCAI 17–24 (2006).

- Sloun, R. J. G. Van et al. Deep Learning for Real-time, Automatic, and Scanner-adapted Prostate (Zone) Segmentation of Transrectal Ultrasound, for Example, Magnetic Resonance Imaging – transrectal Ultrasound Fusion Prostate Biopsy. Eur. Urol. Focus 1–8 (2019). doi:10.1016/j.euf.2019.04.009

- Loch, T. et al. Artificial Neural Network Analysis (ANNA) of Prostatic Transrectal Ultrasound. Prostate 39, 198–204 (1999).

- Pareek, G. et al. Prostate Tissue Characterization/Classification in 144 Patient Population Using Wavelet and Higher Order Spectra Features from Transrectal Ultrasound Images. Technol. Cancer Res. Treat. 12, (2013).

- Mohamed, S. S., Salama, M. M. A., Kamel, M., El-Saadany, E. F. & K Rizkalla, J. C. Prostate cancer multi-feature analysis using trans-rectal ultrasound images. Phys. Med. Biol. 50, 175–185 (2005).

- Lee, H. J. et al. Image-based clinical decision support for transrectal ultrasound in the diagnosis of prostate cancer: comparison of multiple logistic regression, artificial neural network, and support vector machine. Urogenital 20, 1476–1484 (2009).

- Mohamed, S. S., Li, J., Salama, M. M. A. & Freeman, G. Prostate Tissue Texture Feature Extraction for Suspicious Regions Identification on TRUS Images. J. Digit. Imaging 22, 503–518 (2009).

- Wildeboer, R. R. et al. Automated multiparametric localization of prostate cancer based on B-mode, shear-wave elastography, and contrast-enhanced ultrasound radiomics. Europ 30, 806–815 (2019).

- Mortensen, M. A. et al. Artificial intelligence-based versus manual assessment of prostate cancer in the prostate gland: a method comparison study. Clin. Physiol. Funct. Imaging 1–8 (2019). doi:10.1111/cpf.12592

- Rubinstein, E. et al. Unsupervised tumor detection in Dynamic PET/CT imaging of the prostate. Med. Image Anal. 55, 27–40 (2019).

- Alongi, P., Stefano, A., Comelli, A., Barone, R. L. S. & Russo, G. New Artificial intelligence model for 18F-Choline PET/CT in evaluation of high-risk prostate cancer outcome: texture analysis and radiomics features classification for prediction of disease progression. J. Nucl. Med. 61, (2020).

- Ridley, E. L. SNMMI 2020: AI analysis of PET could help classify prostate cancer. Aunt Minnie (2020). Available at: https://www.auntminnie.com/index.aspx?sec=sup&sub=aic&pag=dis&ItemID=129611. (Accessed: 17th November 2020)

- Yusra Sheikh & Saqba Farooq, et al. Prostate cancer. Available at: https://radiopaedia.org/articles/prostate-cancer-3. (Accessed: 17th November 2020)

- Lin, D. J., Johnson, P. M., Knoll, F. & Lui, Y. W. Artificial Intelligence for MR Image Reconstruction: An Overview for Clinicians. 1–14 (2020). doi:10.1002/jmri.27078

- Mottet, N. et al. European Association of Urology - Prostate Cancer. 2020 Available at: https://uroweb.org/guideline/prostate-cancer/. (Accessed: 7th May 2020)

- Tolkach, Y., Toma, M. & Kristiansen, G. High-accuracy prostate cancer pathology using deep learning. Nat. - Mach. Intell. 2, 411–418 (2020).

- Campanella, G. et al. Terabyte-scale Deep Multiple Instance Learning for Classification and Localization in Pathology. arXiv (2018).

- Litjens, G. et al. Deep learning as a tool for increased accuracy and efficiency of histopathological diagnosis. Nat. - Sci. Reports 6, (2016).

- Pantanowitz, L. et al. An artificial intelligence algorithm for prostate cancer diagnosis in whole slide images of core needle biopsies: a blinded clinical validation and deployment study. Lancet Digit. Heal. 2, 407–416 (2020).

- Nagpal, K. et al. Development and validation of a deep learning algorithm for improving Gleason scoring of prostate cancer. NPJ Digit. Med. 2, (2019).

- Hyeon, S. et al. Early experience with Watson for Oncology: a clinical decision-support system for prostate cancer treatment recommendations. World J. Urol. (2020). doi:10.1007/s00345-020-03214-y

- Almeida, G. & Tavares, J. M. Deep Learning in Radiation Oncology Treatment Planning for Prostate Cancer: A Systematic Review. J. Med. Syst. 44, (2020).

- Moore, K. L. Automated Radiotherapy Treatment Planning. Semin. Radiat. Oncol. 29, 209–218 (2019).

- Francolini, G. et al. Artificial Intelligence in radiotherapy: state of the art and future directions. Med. Oncol. 37, (2020).

- Ma, M., Kovalchuk, N., Buyyounouski, M. K., Xing, L. & Yang, Y. Dosimetric Features-Driven Machine Learning Model for DVHs Prediction in VMAT Treatment Planning. Int. J. Med. Phys. Res. Pract. 46, 857–867 (2018).

- Mahdavi, S. R. et al. Use of artificial neural network for pretreatment verification of Intensity Modulation Radiation Therapy fields. Br. J. Radiol. 92, (2019).

- Lee, S. et al. Machine Learning on a Genome-Wide Association Study to Predict Late Genitourinary Toxicity Following Prostate Radiotherapy. Int. J. Radiat. Oncol. Biol. Phys. 101, 128–135 (2018).

- Carrara, M. et al. Development of a Ready-to-Use Graphical Tool Based on Artificial Neural Network Classification: Application for the Prediction of Late Fecal Incontinence After Prostate Cancer Radiation Therapy. Int. J. Radiat. Oncol. Biol. Phys. 1–10 (2018). doi:10.1016/j.ijrobp.2018.07.2014

- Nouranian, S., Ramezani, M., Spadinger, I., Morris, W. J. & Salcudean, S. E. Learning-Based Multi-Label Segmentation of Transrectal Ultrasound Images for Prostate Brachytherapy. IEEE Trans. Med. Imaging 35, 921–932 (2016).

- Nicolae, A. et al. Evaluation of a machine-learning algorithm for treatment planning in prostate Low Dose-Rate brachytherapy. Int. J. Radiat. Oncol. Biol. Phys. (2016). doi:10.1016/j.ijrobp.2016.11.036

- Boussion, N., Valeri, A., Malhaire, J. & Visvikis, D. Predicting the number of seeds in LDR prostate brachytherapy using machine learning and 320 patients. in ESTRO 127, S477–S478 (Elsevier Masson SAS, 2018).

- Schieszer, J. AI Could Improve Prostate Cancer Brachytherapy. Renal and Urology News (2020). Available at: https://www.renalandurologynews.com/home/news/urology/prostate-cancer/artificial-intelligence-could-improve-prostate-cancer-brachytherapy/. (Accessed: 17th November 2020)

- Lowrance, W. T. et al. Contemporary Open and Robotic Radical Prostatectomy Practice Patterns Among Urologists in the United States. J. Urol. 187, 2087–2093 (2012).

- Goldenberg, S. L., Nir, G. & Salcudean, S. A new era: artificial intelligence and machine learning in prostate cancer. Nat. Rev. Urol. 16, (2019).

- Porreca, A., Colicchia, M., Busetto, G. M. & Ferro, M. MRI and Active Surveillance for Prostate Cancer. Diagnostics 10, 590–593 (2020).

- Progression Calculator. Available at: https://canarypass.shinyapps.io/progression_calculator/. (Accessed: 17th November 2020)

- Cryotherapy for Prostate Cancer. Available at: https://www.hopkinsmedicine.org/health/conditions-and-diseases/prostate-cancer/cryotherapy-for-prostate-cancer. (Accessed: 18th November 2020)

- Rashkovska, A., Kocev, D. & Trobec, R. Non-invasive real-time prediction of inner knee temperatures during therapeutic cooling. Comput. Methods Programs Biomed. 1–13 (2015). doi:10.1016/j.cmpb.2015.07.004

- Abreu, A. L. et al. High Intensity Focused Ultrasound Hemigland Ablation for Prostate Cancer: Initial Outcomes of a United States Series. J. Urol. 204, 741–747 (2020).